Agentic AI: What It Is, How It Works, Why It Matters

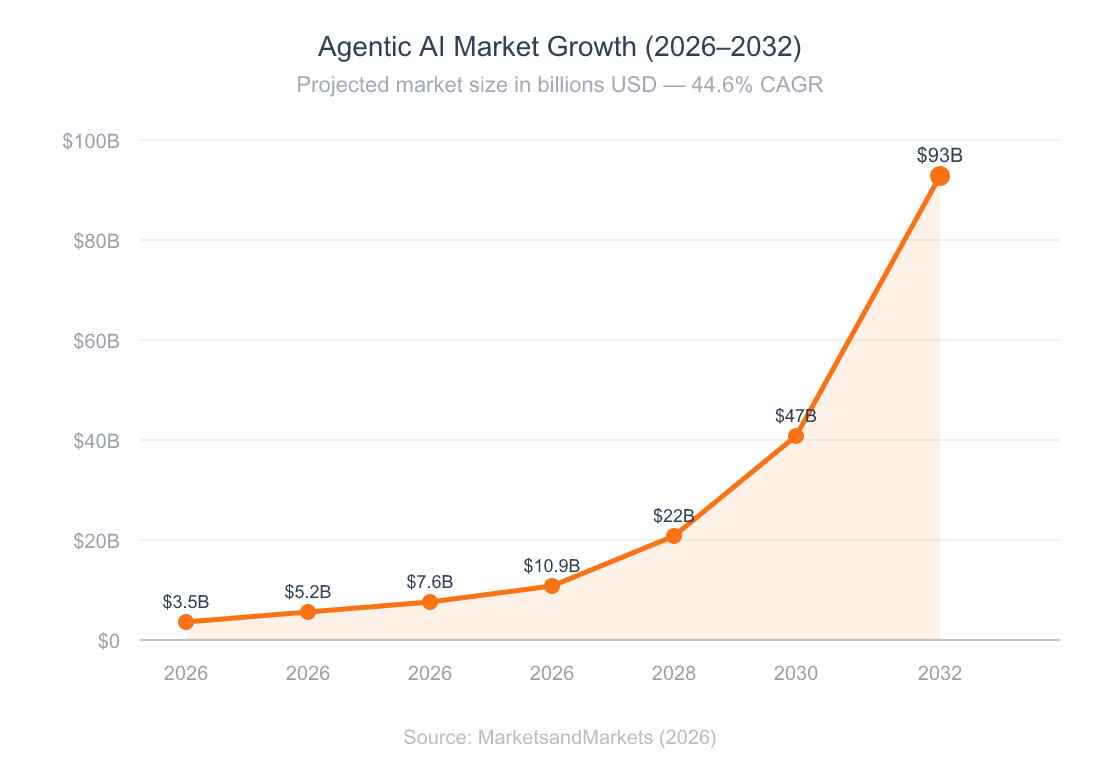

Most AI tools today wait for your prompt and respond, but agentic AI doesn’t — it plans, acts, observes results, and adjusts on its own. The global agentic AI market hit $7.6 billion in 2026 and is projected to reach $93 billion by 2032, a 44.6% CAGR (MarketsandMarkets, 2026). That’s enterprise money flowing into agentic AI systems that reason, use tools, and complete multi-step tasks without supervision.

But here’s the problem: most of what’s marketed as “agentic AI” is just a chatbot with a fancy wrapper, and understanding the difference matters if you’re building products, investing in tools, or trying to figure out where this technology actually fits into your work.

This guide covers what agentic AI really is, the patterns that power it, how the major frameworks compare, and how to build your first agent. I’ve spent months building multi-agent systems for Growth Engine, and I’ll share what actually works versus what just demos well.

TL;DR: Agentic AI refers to AI systems that autonomously plan, execute, and adapt to complete multi-step tasks. The market is growing at 44.6% CAGR to $93B by 2032 (MarketsandMarkets, 2026). Start with single-agent ReAct patterns before scaling to multi-agent orchestration.

Table of Contents

- What Is Agentic AI?

- Why Does Agentic AI Matter?

- How Do AI Agents Actually Work?

- What’s the Difference Between Single-Agent and Multi-Agent Systems?

- How Do the Major Frameworks Compare?

- Where Is Agentic AI Being Used Today?

- How Do You Build Your First AI Agent?

- What Are the Biggest Challenges?

- Advanced: Multi-Agent Orchestration Patterns

- Tools and Resources

- Getting Started

- FAQ

What Is Agentic AI?

Agentic AI describes AI systems that can plan, reason, use tools, and take actions to reach goals on their own — without step-by-step human instructions. Unlike traditional chatbots that respond once and stop, agentic systems run loops: they think, act, observe the result, and decide what to do next.

According to Gartner, 40% of enterprise apps will feature task-specific AI agents by 2026, up from less than 5% in 2025. That is an 8x jump in a single year (Gartner, 2026).

Key Characteristics of Agentic AI

What separates an AI agent from a standard LLM call? Five traits:

- Autonomy: Agents decide their own next steps without waiting for human input at each stage

- Tool use: They call APIs, query databases, browse the web, and write code

- Memory: They retain context across multiple steps and even across sessions

- Planning: They decompose complex goals into sub-tasks and sequence them

- Self-correction: They evaluate their own outputs and retry when something fails

What Agentic AI Is Not

Here’s a misconception worth clearing up: a chatbot that answers questions isn’t agentic, and a prompt chain that runs three LLM calls in sequence isn’t agentic either. Most products marketed as “agentic AI” are really just fixed prompt chains — if-then logic dressed up in AI language. True agency means the system makes decisions the developer didn’t program.

The concept evolved from the ReAct paper (Princeton and Google Research, 2022), through the early AutoGPT experiments of 2023, to today’s production-grade frameworks like LangGraph and CrewAI. The progression has been fast: from “cool demo” to “enterprise deployment” in under three years.

Citation Capsule: Agentic AI systems autonomously plan, reason, use tools, and self-correct to complete multi-step tasks. Gartner predicts 40% of enterprise apps will embed task-specific AI agents by 2026, up from under 5% in 2025 (Gartner, 2026).

Why Does Agentic AI Matter?

McKinsey estimates AI agents could add $2.6 to $4.4 trillion in annual value across business use cases (McKinsey, 2026). The returns aren’t only projected: enterprises using agents already report 171% average ROI and 26-31% cost savings across operations (Deloitte, 2026).

Why now? Three forces converged: foundation models got good enough at reasoning, tool-use APIs matured, and open-source frameworks made coordination accessible to small teams, not just big tech labs.

The agentic AI market is on track for a 12x expansion between 2026 and 2032.

Who Should Care?

If you build software, you’ll likely be wiring agents into your products within two years. If you run a business, agents will increasingly handle your customer service, data analysis, and content workflows. And if you’re a developer weighing career paths, agent engineering is becoming a distinct field.

Gartner also warns that over 40% of agentic AI projects risk cancellation by 2027 without proper governance and observability (Gartner, 2026). The opportunity is massive, but so are the failure modes if you don’t understand the basics.

How Do AI Agents Actually Work?

AI agents work on a simple loop: perceive, reason, act, observe. The ReAct pattern, introduced in a 2022 paper from Princeton and Google Research, formalized this loop, and it remains the dominant pattern in production today. An agent gets a goal, thinks about what to do, takes an action (like calling an API), observes the result, then decides the next step, repeating until the task is done.

There are three main patterns powering agentic AI today, and the right one depends on your constraints around latency, cost, and reliability.

The ReAct Pattern (Reason + Act)

This is the most common pattern: the agent interleaves reasoning steps with tool calls in a loop:

- Thought: The model reasons about what it knows and what it needs

- Action: It selects a tool and provides input (e.g., “search the web for X”)

- Observation: It receives the tool’s output

- Repeat: Until it has enough information to answer

ReAct shines on exploratory tasks where the next step depends on what just happened, but each loop step costs tokens and adds latency, so it’s overkill for simple tasks.
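The Thought, Action, Observation cycle fits in a few lines of plain Python. This is a minimal, framework-free sketch: `scripted_model` is a hypothetical stand-in for an LLM call that returns either a tool request or a final answer, and `search` is a toy lookup table rather than a real search API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


def search(query: str) -> str:
    """Hypothetical toy tool: a lookup table standing in for web search."""
    facts = {"capital of france": "Paris"}
    return facts.get(query.lower(), "no results")


TOOLS: Dict[str, Callable[[str], str]] = {"search": search}


@dataclass
class Step:
    thought: str
    action: Optional[str] = None  # tool name, or None when the model answers
    action_input: str = ""
    answer: Optional[str] = None


History = List[Tuple[Step, str]]  # (step taken, observation received)


def scripted_model(goal: str, history: History) -> Step:
    """Stand-in for an LLM: search once, then answer from the observation."""
    if not history:
        return Step("I need to look this up.", action="search",
                    action_input="capital of France")
    _, observation = history[-1]
    return Step("The observation answers the goal.", answer=observation)


def react(goal: str, model: Callable[[str, History], Step] = scripted_model,
          max_steps: int = 5) -> str:
    history: History = []
    for _ in range(max_steps):
        step = model(goal, history)  # Thought, plus a chosen Action
        if step.answer is not None:  # the model decided it is done
            return step.answer
        observation = TOOLS[step.action](step.action_input)  # Action
        history.append((step, observation))  # Observation, then repeat
    return "stopped: step limit reached"


print(react("What is the capital of France?"))  # Paris
```

The `max_steps` cap is doing real work here: without it, a model that never emits an answer would loop (and spend tokens) forever.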

Plan-and-Execute

Instead of reasoning step-by-step, the agent creates a full plan upfront, then runs each step in sequence. This works better for well-defined tasks where you can split the work ahead of time, and it uses fewer LLM calls while staying more predictable.

The trade-off is that if the plan is wrong, the agent may run several steps before realizing it needs to backtrack, so plan-and-execute agents need good error handling and replanning logic.
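A minimal sketch of plan-and-execute with a replanning hook, under stated assumptions: `make_plan` and `replan` are hypothetical stand-ins for LLM calls, and each plan step is a plain Python function that raises on failure. The first fetch is rigged to fail so the replanning path runs.

```python
from typing import Any, Callable, Dict, List, Tuple

Context = Dict[str, Any]
PlanStep = Tuple[str, Callable[[Context], Context]]  # (description, action)


def fetch_primary(ctx: Context) -> Context:
    raise ConnectionError("primary source unavailable")  # simulated failure


def fetch_backup(ctx: Context) -> Context:
    return {**ctx, "data": [3, 1, 2]}


def summarize(ctx: Context) -> Context:
    return {**ctx, "summary": f"{len(ctx['data'])} records, max={max(ctx['data'])}"}


def make_plan(goal: str) -> List[PlanStep]:
    """Hypothetical planner; in a real agent this is one upfront LLM call."""
    return [("fetch data", fetch_primary), ("summarize", summarize)]


def replan(failed_step: str, remaining: List[PlanStep]) -> List[PlanStep]:
    """Hypothetical replanner: swap the failed fetch for a backup source."""
    return [("fetch data (backup)", fetch_backup)] + remaining


def plan_and_execute(goal: str, max_replans: int = 2) -> Context:
    plan = make_plan(goal)
    ctx: Context = {}
    replans = 0
    while plan:
        name, action = plan.pop(0)
        try:
            ctx = action(ctx)
        except Exception:
            if replans >= max_replans:
                raise  # give up rather than replan forever
            replans += 1
            plan = replan(name, plan)  # backtrack: revise the rest of the plan
    return ctx


print(plan_and_execute("weekly data report")["summary"])  # 3 records, max=3
```

Note the single planning call up front versus one model call per step in ReAct; the `max_replans` bound keeps a bad plan from turning into an endless backtracking loop.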

Multi-Agent Collaboration

Multiple specialized agents divide the work — one agent plans, another researches, a third writes code, and a coordinator manages the workflow. This mirrors how human teams work, and it scales to complex tasks that no single agent could handle well.

When I built the multi-agent system for Growth Engine, I started with a single ReAct agent that handled everything. It worked for simple tasks but fell apart on complex marketing kit generation. Splitting into specialized agents (a researcher, a writer, a strategist, and a coordinator) noticeably improved output quality, but the debugging cost tripled overnight.

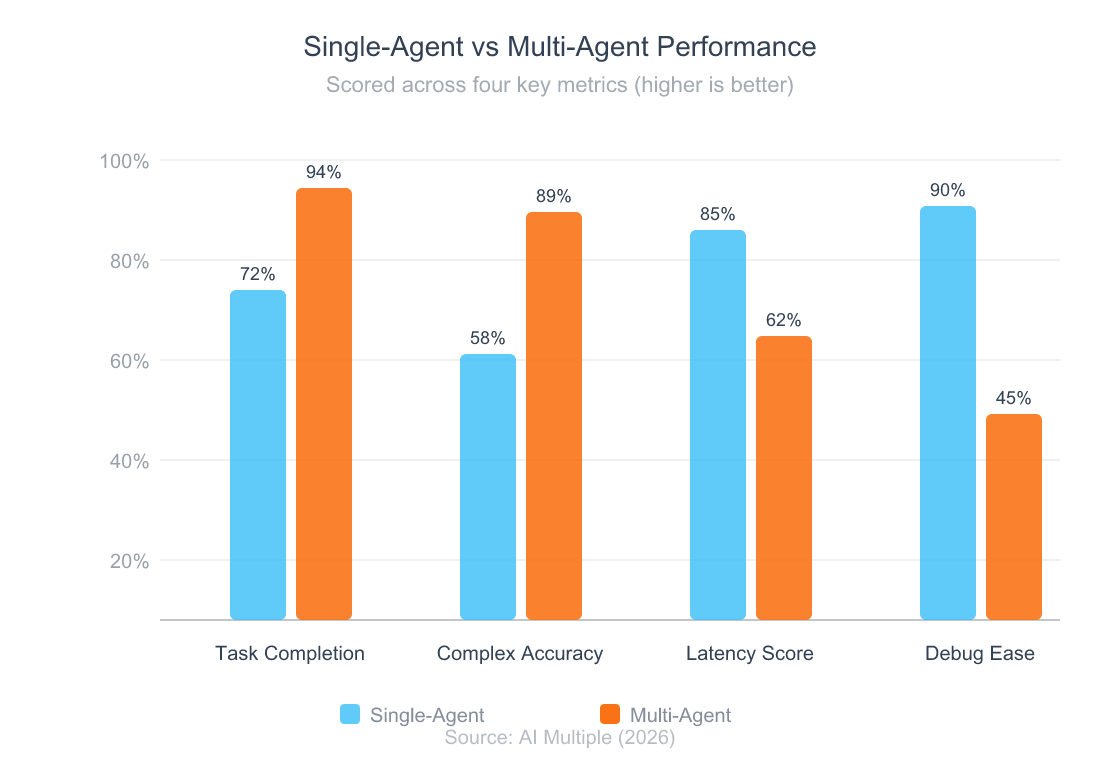

Citation Capsule: AI agents primarily use three architecture patterns: ReAct (reason-act loops), plan-and-execute (upfront decomposition), and multi-agent collaboration (specialized agents coordinating). Enterprises deploying multi-agent architectures report 3x faster task completion and 60% better accuracy on complex workflows versus single-agent systems (AI Multiple, 2026).

What’s the Difference Between Single-Agent and Multi-Agent Systems?

Enterprises using multi-agent setups report 3x faster task completion and 60% better accuracy on complex workflows compared to single-agent ones (AI Multiple, 2026). But that doesn’t mean multi-agent is always better — the right choice depends on your task complexity and tolerance for debugging.

Single-Agent Systems

A single agent handles the entire task with access to all tools and makes all decisions, which makes it simpler to build, test, and debug. For tasks like “research this topic and summarize the findings,” a single agent works well.

Best for: Straightforward tasks, prototyping, lower-budget deployments, tasks where latency matters.

Multi-Agent Systems

Multiple agents, each with a specific role, collaborate through message passing or a shared workspace. A coordinator agent delegates tasks, collects results, and synthesizes the final output.

Best for: Complex workflows with distinct phases, tasks requiring different expertise, high-stakes outputs that benefit from agent-to-agent review.

Multi-agent systems win on accuracy and task completion for complex work but give up latency and ease of debugging.

When to Use Which?

Start with a single agent. Seriously. Most developers jump to multi-agent setups too early, adding coordination overhead they don’t need. Add agents only when you hit a clear wall: when one agent can’t handle the range of tools, context, or skills the task demands.

How do you know it’s time to split? Watch for these signals: the agent’s context window fills up before the task completes, output quality drops because the agent is juggling too many jobs, or the task has clear phases (research, analysis, writing) that benefit from specialization.

How Do the Major Frameworks Compare?

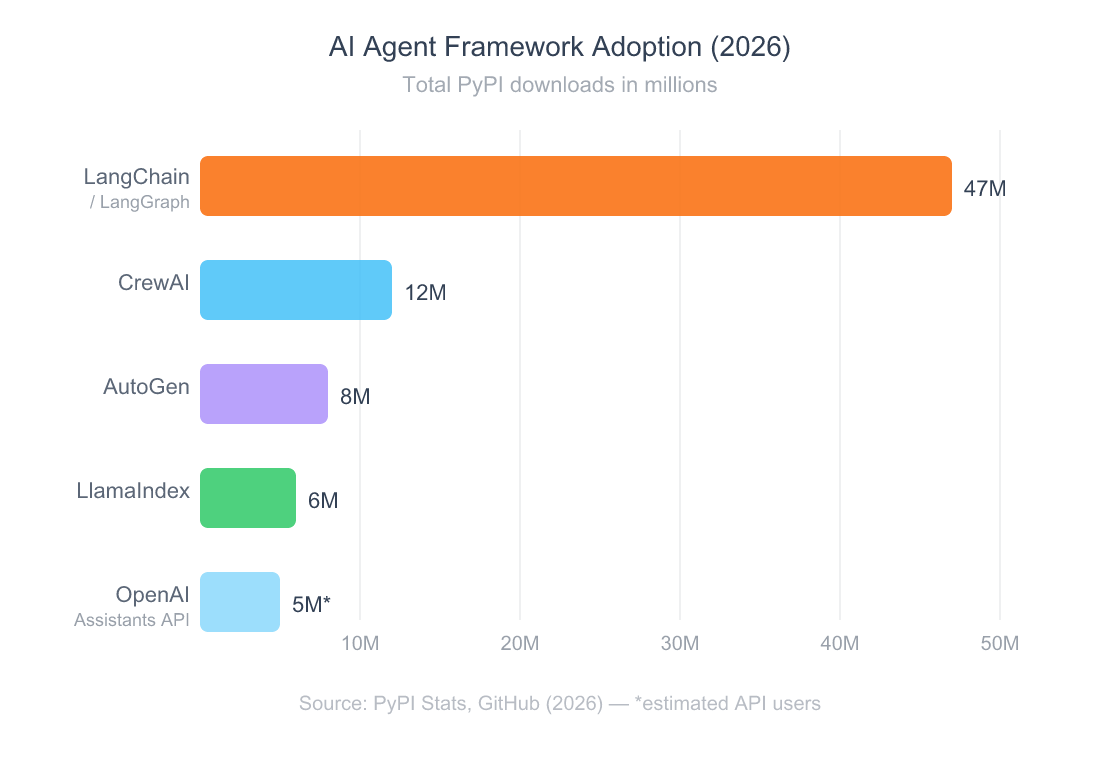

LangChain has been downloaded over 47 million times on PyPI, making it the most widely adopted agentic AI framework to date (LangChain, 2026). But adoption doesn’t make it the right choice for every project. More broadly, 68% of production agentic AI systems run on open-source frameworks rather than closed platforms (Multimodal.dev, 2026). Here’s how the top three frameworks compare based on real-world use.

LangChain / LangGraph

LangGraph is a graph-based workflow engine where you define nodes (agent steps) and edges (transitions). It offers the most complete ecosystem — LangSmith for tracing, LangServe for deployment — with production-tested reliability and the best debugging tools you can get today. The trade-off is a steep learning curve and verbose boilerplate, so it’s best for production multi-step pipelines that need predictable control flow.
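To make “nodes and edges” concrete, here is a toy, dependency-free graph engine. It sketches the idea only and is not LangGraph’s API: LangGraph’s `StateGraph` has its own node, edge, and conditional-edge methods with richer semantics (state reducers, checkpointing). The `draft` and `review` nodes and the three-draft approval rule are hypothetical.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]
END = "__end__"


class Graph:
    """Toy workflow engine: nodes transform state, edges choose the next node."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[[State], State]] = {}
        self.routes: Dict[str, Callable[[State], str]] = {}

    def add_node(self, name: str, fn: Callable[[State], State]) -> None:
        self.nodes[name] = fn

    def add_edge(self, src: str, dst: str) -> None:
        self.routes[src] = lambda state: dst  # fixed transition

    def add_conditional_edge(self, src: str, router: Callable[[State], str]) -> None:
        self.routes[src] = router  # transition decided from the current state

    def run(self, start: str, state: State, max_steps: int = 20) -> State:
        node = start
        for _ in range(max_steps):
            state = self.nodes[node](state)
            node = self.routes[node](state)
            if node == END:
                return state
        raise RuntimeError("step limit reached")


def draft(state: State) -> State:
    return {**state, "drafts": state.get("drafts", 0) + 1}


def review(state: State) -> State:
    return {**state, "approved": state["drafts"] >= 3}  # toy approval rule


graph = Graph()
graph.add_node("draft", draft)
graph.add_node("review", review)
graph.add_edge("draft", "review")
graph.add_conditional_edge("review", lambda s: END if s["approved"] else "draft")
final = graph.run("draft", {})
print(final)  # {'drafts': 3, 'approved': True}
```

The appeal of the graph model is that control flow lives in explicit edges you can inspect and trace, rather than being left entirely to the model’s judgment.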

CrewAI

CrewAI uses a role-based model inspired by real team structures: you define agents with specific roles, goals, and backstories — like hiring a team. It’s the fastest path from idea to working prototype, with A2A protocol support for agent-to-agent work. You get less control over execution flow than LangGraph, but it’s much faster to get started, so it’s best for rapid prototyping and role-based workflows.

AutoGen (Microsoft)

AutoGen is a chat-based collaboration framework where agents talk to each other through natural-language messages. It’s good for brainstorming and iterative tasks and integrates with the Microsoft ecosystem. However, Microsoft has moved AutoGen into maintenance mode in favor of the broader Microsoft Agent Framework, so it’s now best suited to research projects and chat-based multi-agent experiments.

After building agents with all three frameworks, here’s my honest take: LangGraph wins for production systems where reliability matters, CrewAI wins for speed-to-prototype when you’re exploring ideas, and AutoGen’s future is unclear given Microsoft’s strategic shift. If I were starting today, I’d learn LangGraph first and use CrewAI for experiments.

LangChain dominates framework adoption, but CrewAI is the fastest-growing contender.

Citation Capsule: LangChain/LangGraph leads AI agent framework adoption with 47 million PyPI downloads, while 68% of production agents run on open-source frameworks (Multimodal.dev, 2026). CrewAI’s role-based approach is the fastest path to a working prototype, though LangGraph remains the production standard.

Where Is Agentic AI Being Used Today?

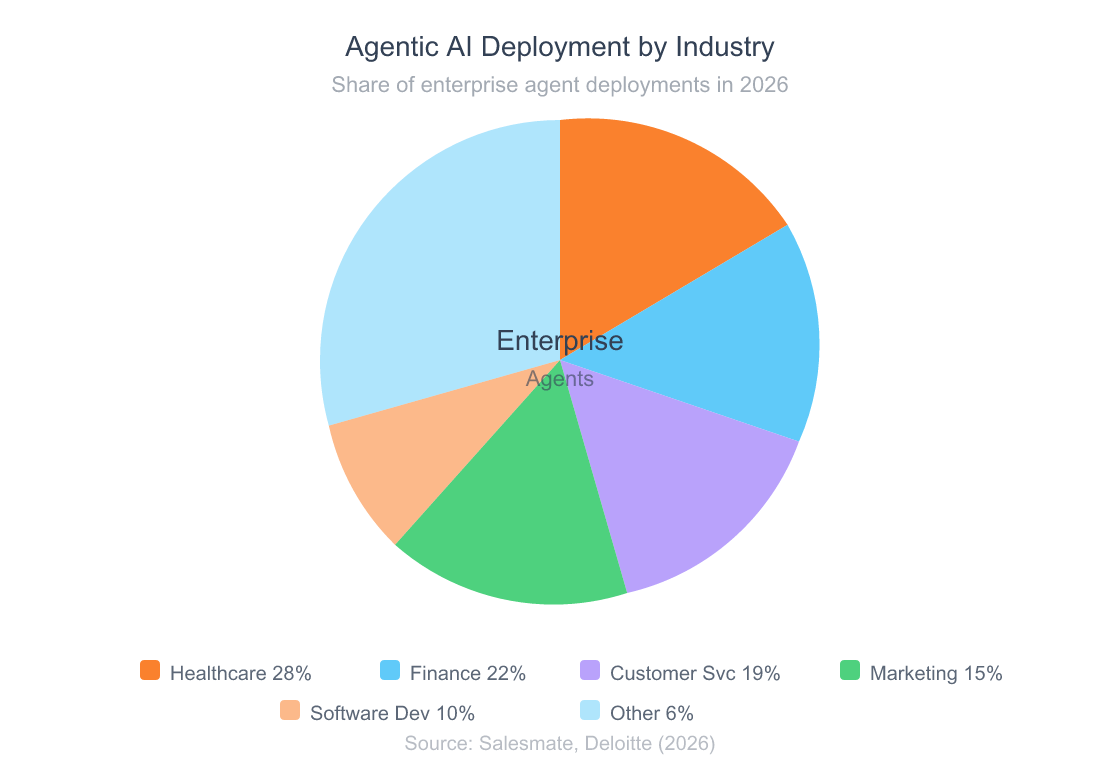

Healthcare leads agentic AI adoption at 68%, followed by telecom at 48% and retail at 47% (Salesmate, 2026). But the real story isn’t which industry is ahead — it’s how agentic AI is reshaping specific workflows across all of them.

Customer Service

Gartner predicts that by 2029, agentic AI will resolve 80% of common customer service issues without human help, leading to a 30% drop in operating costs (Gartner, 2026). Today’s agents already handle tier-1 support tickets, route complex issues, and draft responses for human review.

Finance and Compliance

Banks and financial firms use agents for KYC automation, credit checks, fraud detection, and regulatory monitoring. Agents process thousands of documents in parallel while keeping the audit trails compliance teams need.

Marketing and Content

This is where I’ve seen the impact firsthand: agents can research competitors, generate content briefs, write first drafts, optimize for SEO, and schedule distribution, all as one coordinated workflow. Companies report up to 37% cost savings in marketing through agent rollouts (Deloitte, 2026).

Software Development

AI coding agents handle bug fixes, code reviews, test generation, and implementing features from issue descriptions. They don’t replace developers; they take on the boring work so developers can focus on design and architecture.

Healthcare and finance dominate agent deployment, but customer service and marketing are growing fastest.

How Do You Build Your First AI Agent?

You don’t need a PhD in machine learning to build an AI agent. You need an API key, a framework, and a clear sense of what problem you’re solving. The biggest mistake beginners make isn’t technical; it’s choosing a task that’s too complex for a first build. According to McKinsey, less than 10% of firms have scaled AI agents in any single function (McKinsey, 2026), so start small.

Step 1: Define a Single, Specific Task

Don’t build “an AI assistant.” Build an agent that does one thing: summarizes research papers, monitors competitor pricing, or drafts email responses based on CRM data. Specificity is your friend.

Step 2: Choose Your Stack

For beginners, I’d suggest this starting stack:

- LLM: Claude or GPT-4o (both handle tool-use well)

- Framework: LangGraph for production intent, CrewAI for prototyping

- Tools: Start with 2-3 tools max (web search, file read/write, API call)

- Tracing: LangSmith (free tier) for debugging

Step 3: Build the ReAct Loop

Start with the simplest version that works: your agent gets a task, thinks about what tool to call, calls it, reads the result, and decides if it’s done. Don’t add memory, multi-agent coordination, or custom tools until the basic loop works reliably.

Step 4: Add Guardrails and Test Failures

Before shipping, add hard limits on loop steps and tokens, output checks, human-in-the-loop approval for high-stakes actions, and logging for every thought and action. The most common beginner mistake is giving the agent too many tools: more tools means more confusion, so start with two or three and add more only when needed.
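A minimal sketch of those guardrails as a wrapper the agent loop calls into. Assumptions: token counts come from your LLM provider’s usage data, `approve` is a hypothetical callback that would prompt a human reviewer in practice, and which tools count as high-stakes (`send_email` here) is your decision.

```python
import logging
from typing import Callable, Dict, Set

logging.basicConfig(level=logging.INFO, format="%(name)s %(message)s")
log = logging.getLogger("agent")

Tools = Dict[str, Callable[[str], str]]
HIGH_STAKES: Set[str] = {"send_email"}  # hypothetical: tools that need sign-off


class BudgetExceeded(RuntimeError):
    pass


class Guardrails:
    def __init__(self, max_steps: int, max_tokens: int,
                 approve: Callable[[str, str], bool]) -> None:
        self.max_steps = max_steps
        self.max_tokens = max_tokens
        self.approve = approve  # human-in-the-loop callback
        self.steps = 0
        self.tokens = 0

    def record_step(self, tokens_used: int) -> None:
        """Call once per loop iteration; stops the run when a cap is hit."""
        self.steps += 1
        self.tokens += tokens_used
        if self.steps > self.max_steps or self.tokens > self.max_tokens:
            raise BudgetExceeded(f"{self.steps} steps, {self.tokens} tokens")

    def call_tool(self, tools: Tools, name: str, arg: str) -> str:
        log.info("action=%s input=%r", name, arg)  # log every action
        if name in HIGH_STAKES and not self.approve(name, arg):
            result = "denied: a human reviewer rejected this action"
        else:
            result = tools[name](arg)
        log.info("observation=%r", result)  # and every observation
        return result


tools: Tools = {"search": lambda q: "3 results", "send_email": lambda body: "sent"}
rails = Guardrails(max_steps=10, max_tokens=5_000,
                   approve=lambda name, arg: False)  # this reviewer says no
print(rails.call_tool(tools, "send_email", "Hi!"))
```

Returning the denial as an observation, rather than raising, lets the agent explain or pick a different action instead of crashing mid-task.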

The second mistake is skipping failure cases. What happens when an API returns an error, or when the LLM invents a tool that doesn’t exist? Build for these cases from day one.
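One common way to handle both cases is to turn failures into observations instead of crashing, so the model sees what went wrong and can choose another action. A sketch, with `flaky_api`, `crm_lookup`, and `web_browse` as hypothetical names:

```python
from typing import Callable, Dict

Tools = Dict[str, Callable[[str], str]]


def safe_tool_call(tools: Tools, name: str, arg: str) -> str:
    """Convert failures into observations the model can reason about."""
    if name not in tools:  # the model invented a tool
        return f"error: unknown tool {name!r}; available: {', '.join(sorted(tools))}"
    try:
        return tools[name](arg)
    except Exception as exc:  # API error, timeout, bad input, ...
        return f"error: {name} failed with {type(exc).__name__}: {exc}"


def flaky_api(arg: str) -> str:
    raise TimeoutError("upstream timed out")  # simulated API failure


tools: Tools = {"search": lambda q: f"results for {q}", "crm_lookup": flaky_api}
print(safe_tool_call(tools, "web_browse", "x"))
# error: unknown tool 'web_browse'; available: crm_lookup, search
print(safe_tool_call(tools, "crm_lookup", "acme"))
# error: crm_lookup failed with TimeoutError: upstream timed out
```

Listing the available tools in the error message gives the model a concrete way to recover. Pair this with a step cap so a model that keeps retrying the same failing call still stops.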

What Are the Biggest Challenges?

Despite 79% of firms reporting some level of agentic AI adoption, over 40% of agentic AI projects risk cancellation by 2027 without proper governance (Gartner, 2026). The agentic AI tech works — the rollout is where things break.

Hallucinations and Reliability

The best LLMs have cut hallucination rates from 21.8% to 0.7% over the past few years, a 96% drop (Suprmind, 2026). But in agentic systems, errors compound: if an agent makes a wrong call at step 3 of a 10-step process, every later step builds on that mistake. This is why tracing isn’t optional; it’s the difference between a working agent and a costly random number generator.
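A back-of-the-envelope model shows how quickly this adds up. Assuming each step fails independently with the same probability (a simplification, since real errors are often correlated), the chance that a whole run is error-free shrinks geometrically with its length:

```python
def run_failure_rate(per_step_error: float, steps: int) -> float:
    """Probability that at least one of `steps` independent steps goes wrong."""
    return 1 - (1 - per_step_error) ** steps


for steps in (1, 3, 10, 30):
    rate = run_failure_rate(0.01, steps)
    print(f"{steps:>2} steps at 1% per step -> {rate:.1%} of runs fail")
```

At 1% per step, a 10-step run fails about 9.6% of the time and a 30-step run about 26% of the time, which is why shorter loops and per-step checks pay off.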

Cost Unpredictability

Each ReAct loop step costs tokens, so a simple task might take 3 iterations while a complex one takes 30. Without token limits and cost monitoring, agent rollouts can rack up surprise bills; we’ve seen individual agent runs cost $15-20 when the loop gets stuck in a retry cycle.
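A simple budget meter makes a runaway loop fail fast instead of showing up on the invoice. The per-token prices below are placeholders (substitute your provider’s current rates), and the stuck loop is simulated by resending a growing context on every step:

```python
from dataclasses import dataclass

# Placeholder prices in USD per million tokens; not any provider's official rates.
PRICE_PER_M_INPUT = 2.50
PRICE_PER_M_OUTPUT = 10.00


@dataclass
class CostMeter:
    budget_usd: float
    spent_usd: float = 0.0

    def charge(self, input_tokens: int, output_tokens: int) -> None:
        self.spent_usd += (input_tokens * PRICE_PER_M_INPUT
                           + output_tokens * PRICE_PER_M_OUTPUT) / 1_000_000
        if self.spent_usd > self.budget_usd:
            raise RuntimeError(f"budget exceeded: ${self.spent_usd:.2f}")


meter = CostMeter(budget_usd=1.00)
steps = 0
try:
    while True:  # simulates a loop stuck in a retry cycle
        steps += 1
        # Context grows each step because the full history is resent.
        meter.charge(input_tokens=2_000 * steps, output_tokens=300)
except RuntimeError as err:
    print(steps, err)
```

Because each iteration resends the whole history, cost grows roughly quadratically with loop length, so a hard cap per run matters more than a per-call limit.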

Security and Trust

Agents that call external APIs, write files, or send emails open up attack surfaces that traditional software doesn’t have. What if someone plants instructions in a document the agent reads (prompt injection), or the agent decides to call an API endpoint you didn’t intend? These aren’t theoretical risks; they’re active concerns in every production rollout.
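Two cheap mitigations, sketched under stated assumptions: an exact-match host allowlist for outbound calls (the hostnames are hypothetical), and wrapping retrieved text in delimiters so the system prompt can tell the model to treat it as data. Neither is a complete defense against prompt injection; they narrow the attack surface.

```python
from urllib.parse import urlparse

# Hypothetical allowlist: the only hosts this agent may call.
ALLOWED_HOSTS = {"api.example-crm.com", "api.example-search.com"}


def is_allowed(url: str) -> bool:
    """Exact host match over HTTPS; prefix or substring checks are easy to bypass."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in ALLOWED_HOSTS


def wrap_untrusted(text: str) -> str:
    """Delimit retrieved content so the prompt can say: this is data, not instructions."""
    return f"<untrusted_document>\n{text}\n</untrusted_document>"


for url in ("https://api.example-crm.com/v1/contacts",
            "https://api.example-crm.com.evil.net/steal",
            "https://api.example-crm.com@evil.net/"):
    print(url, "->", is_allowed(url))
```

The second and third URLs both start with an allowed hostname string, which is exactly why the check parses the URL and compares the hostname rather than using `startswith`.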

Evaluation Is Hard

How do you measure whether an agent is performing well? Traditional metrics like accuracy don’t capture the full picture, so you need to evaluate tool choice, reasoning coherence, cost, and task completion rate together.
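A sketch of scoring a batch of recorded runs on several axes at once. The `Run` record and the “expected tools” labels (which a human reviewer would supply) are assumptions for illustration. Reasoning coherence is left out because it usually needs human review or an LLM-as-judge rather than a formula.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Run:
    completed: bool            # did the agent finish the task?
    tools_called: List[str]    # tools the agent actually used, in order
    expected_tools: List[str]  # tools a reviewer judged appropriate
    cost_usd: float


def evaluate(runs: List[Run]) -> Dict[str, float]:
    # Per run: share of tool calls that hit an appropriate tool.
    precisions = [
        sum(tool in r.expected_tools for tool in r.tools_called) / len(r.tools_called)
        for r in runs
        if r.tools_called
    ]
    return {
        "completion_rate": sum(r.completed for r in runs) / len(runs),
        "tool_precision": sum(precisions) / len(precisions) if precisions else 0.0,
        "avg_cost_usd": sum(r.cost_usd for r in runs) / len(runs),
    }


runs = [
    Run(True, ["search", "summarize"], ["search", "summarize"], 0.08),
    Run(True, ["search", "send_email"], ["search"], 0.12),
    Run(False, ["search"] * 4, ["search", "summarize"], 0.40),  # looped, never finished
]
print(evaluate(runs))
```

The third run has perfect tool precision yet never finished and cost the most, which is the case a single metric would miss.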

Citation Capsule: Over 40% of enterprise agentic AI projects risk cancellation by 2027 due to governance gaps (Gartner, 2026). Key failure modes include compounding hallucination errors, unpredictable token costs, and security vulnerabilities from agent-to-API interactions.

Advanced: Multi-Agent Orchestration Patterns

If you’re already building single-agent systems and hitting their limits, here’s how to scale to multi-agent setups that actually work in production.

The Supervisor Pattern

One coordinator agent receives the task, breaks it into sub-tasks, hands them off to specialized worker agents, collects results, and stitches the final output together. This is the most common production pattern because it’s predictable and easy to debug.

For Growth Engine’s marketing kit generation, I use a supervisor pattern where a coordinator delegates to a market researcher, brand strategist, content writer, and SEO analyst. The coordinator handles conflicts — when the SEO agent wants keyword-stuffed headings and the writer wants natural language, the coordinator makes the call. Without this explicit conflict step, agents produce muddled outputs.
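A stripped-down sketch of the supervisor pattern with an explicit conflict step. It is an illustration, not the Growth Engine implementation: the workers are hypothetical functions standing in for LLM-backed agents, and the fixed ownership table is one simple conflict policy among many (a coordinator could instead ask an LLM to arbitrate).

```python
from typing import Callable, Dict

Proposal = Dict[str, str]


def researcher(task: str) -> Proposal:
    return {"insight": f"competitors rarely mention pricing for {task}"}


def writer(task: str) -> Proposal:
    return {"headline": f"How {task} saves small teams time"}


def seo_analyst(task: str) -> Proposal:
    return {"headline": f"{task} | best {task} tool | {task} software"}


WORKERS: Dict[str, Callable[[str], Proposal]] = {
    "researcher": researcher,
    "writer": writer,
    "seo": seo_analyst,
}
# Explicit conflict policy: which agent has the final say on each field.
OWNER = {"insight": "researcher", "headline": "writer"}


def supervise(task: str) -> Proposal:
    proposals = {name: work(task) for name, work in WORKERS.items()}  # delegate
    final: Proposal = {}
    for field, owner in OWNER.items():
        candidates = {name: p[field] for name, p in proposals.items() if field in p}
        if not candidates:
            continue
        # Conflict step: the owner's version wins; otherwise take any proposal.
        final[field] = candidates.get(owner, next(iter(candidates.values())))
    return final


print(supervise("invoicing"))
```

Making the policy explicit is the point: when the writer and the SEO agent disagree on the headline, the outcome is decided by a rule you can read and change, not by whichever output happened to arrive last.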

The Pipeline Pattern

Agents are arranged in sequence, and Agent A’s output becomes Agent B’s input. This works well for linear workflows like research → analyze → write → edit → publish, and it’s simpler than the supervisor pattern but less flexible.
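In code, a pipeline is just function composition. The stages below are hypothetical placeholders for agents (the publish step is omitted):

```python
from functools import reduce
from typing import Callable, List

Stage = Callable[[str], str]


# Hypothetical stages; in practice each would wrap an agent.
def research(topic: str) -> str:
    return f"notes on {topic}"


def analyze(notes: str) -> str:
    return f"analysis of ({notes})"


def write(analysis: str) -> str:
    return f"draft based on {analysis}"


def edit(draft: str) -> str:
    return draft[0].upper() + draft[1:]


def run_pipeline(stages: List[Stage], initial: str) -> str:
    # Each stage's output becomes the next stage's input.
    return reduce(lambda data, stage: stage(data), stages, initial)


print(run_pipeline([research, analyze, write, edit], "agent pricing"))
# Draft based on analysis of (notes on agent pricing)
```

The rigidity is visible here: `edit` has no way to send work back to `research`, which is exactly the flexibility the supervisor pattern adds.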

The Debate Pattern

Two or more agents argue opposing sides, then a judge agent weighs the arguments. It’s costly (3x the LLM calls) but produces clearly better results for subjective tasks like strategy and content.
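Structurally, the debate pattern is a fan-out followed by a judgment. In this sketch the debaters and judge are hypothetical stand-ins (a real judge would be an LLM, not a reason counter); the returned call count shows where the roughly 3x cost comes from with two debaters and one judge:

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Argument:
    position: str
    reasons: List[str]


Debater = Callable[[str], Argument]
Judge = Callable[[str, List[Argument]], Argument]


def debate(question: str, debaters: List[Debater], judge: Judge) -> Tuple[Argument, int]:
    arguments = [argue(question) for argue in debaters]  # one LLM call each
    winner = judge(question, arguments)  # plus one call for the judge
    return winner, len(debaters) + 1  # (verdict, LLM calls used)


# Hypothetical stand-ins for LLM-backed agents.
def pro(question: str) -> Argument:
    return Argument("launch on Product Hunt", ["built-in audience", "fast feedback"])


def con(question: str) -> Argument:
    return Argument("launch to the email list first", ["warmer audience"])


def toy_judge(question: str, arguments: List[Argument]) -> Argument:
    # A real judge would be an LLM weighing the arguments; this toy rule
    # simply prefers the position backed by more distinct reasons.
    return max(arguments, key=lambda a: len(set(a.reasons)))


winner, calls = debate("How should we launch?", [pro, con], toy_judge)
print(winner.position, calls)  # launch on Product Hunt 3
```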

Before going multi-agent, make sure you have reliable single-agent results (above 85% task completion), tracing in place, clear non-overlapping roles, and cost monitoring per agent.

Tools and Resources

LangGraph and LangSmith together form the most complete agentic AI build and tracing stack you can get today, but depending on your use case, other tools may fit better.

Frameworks (Free / Open Source)

- LangGraph: Graph-based agent orchestration. Best for production multi-step pipelines. Free and open source.

- CrewAI: Role-based multi-agent framework. Best for rapid prototyping and team-based agent designs. Free and open source.

- AutoGen: Conversational multi-agent framework by Microsoft. Best for research and experimentation. Free, but now in maintenance mode.

- LlamaIndex: Data-focused agent framework. Best for RAG-heavy agent workflows. Free and open source.

Observability

- LangSmith: Tracing, evaluation, and monitoring for LangChain agents. Free tier available. Best-in-class debugging.

Learning

- DeepLearning.AI: Free short courses on building agents with LangGraph and CrewAI. Best starting point.

- LangChain Docs: Comprehensive guides and tutorials, significantly improved in 2026.

I’ve personally used LangGraph, CrewAI, and AutoGen in production. LangSmith’s tracing has saved me more debugging hours than any other tool in my stack. If you’re building agents professionally, start there.

Getting Started

Install LangGraph and create a basic ReAct agent in under 30 minutes. That’s your first step — not reading another article about AI agents, but building one.

Step 1 (5 minutes): Install the framework (pip install langgraph langchain-openai), get an API key, and run the “hello world” agent from the LangGraph quickstart guide. See it work end to end before tweaking anything.

Step 2 (30 minutes): Give the agent a real task from your work, something you do by hand every week, like researching a topic, summarizing documents, or pulling data from an API. Build the simplest version that works.

Step 3 (ongoing): Add one upgrade per week — better prompts, an extra tool, output checks, or memory across sessions. Each pass teaches you more than another tutorial ever could.

Don’t let multi-agent complexity scare you. Every production system started as a single-agent prototype that barely worked, and the teams winning aren’t the ones with the most complex setups; they’re the ones who shipped something simple and iterated.

FAQ

What is agentic AI in simple terms?

Agentic AI refers to AI systems that can plan on their own, use tools, and complete multi-step tasks without human help at each step. Think of it as giving an AI a goal instead of a single instruction — the AI then figures out the steps, runs them, and self-corrects along the way. The market for these systems hit $7.6 billion in 2026 (MarketsandMarkets, 2026).

How is agentic AI different from regular AI chatbots?

A chatbot responds to one prompt at a time and stops. An agentic system gets a goal, creates a plan, takes many actions (calling APIs, searching the web, writing files), checks its progress, and adjusts its approach. The key difference is the loop: agents keep going until the task is complete, while chatbots produce a single response and wait for the next prompt.

Which AI agent framework should I learn first?

Start with LangGraph if you plan to build production systems, since it has the largest ecosystem (47 million PyPI downloads), the best tracing tools via LangSmith, and the most job market demand. If you want something faster to learn for prototyping, try CrewAI — its role-based model is easier to grasp for beginners.

How much does it cost to run AI agents?

Costs vary widely with task complexity: a simple ReAct agent finishing a 3-step task might cost $0.05-0.10 per run using GPT-4o, while complex multi-agent workflows can cost $1-20 per run. The key cost driver is the number of LLM calls, since each reasoning step and tool-use loop burns tokens, so set hard token limits to prevent runaway costs.

Are AI agents reliable enough for production use?

The best foundation models have cut hallucination rates to 0.7%, down from 21.8% a few years earlier (Suprmind, 2026). However, agents compound errors across steps: at a 1% error rate per step, roughly 10% of 10-step runs fail (1 - 0.99^10 ≈ 9.6%, assuming independent errors). Production agents therefore need guardrails such as output checks, human approval for high-risk actions, and full logging.

Will AI agents replace software developers?

No. Agents handle boring tasks like boilerplate code, test generation, and bug fixes — the work most developers don’t enjoy anyway. What’s changing is the developer role: instead of writing every line of code, developers more often set goals, design architectures, and supervise agent workflows. McKinsey reports less than 10% of firms have scaled agents in any single function (McKinsey, 2026), so wide-scale displacement is years away.

What industries benefit most from agentic AI?

Healthcare leads adoption at 68%, driven by patient intake automation, compliance docs, and clinical decision support. Finance follows with strong use in KYC automation and fraud detection. Customer service is the fastest-growing segment — Gartner predicts agents will resolve 80% of common support issues on their own by 2029 (Gartner, 2026).

The Future Is Agentic — But Start Simple

The single most important takeaway is that agentic AI is real, it’s in production, and it’s growing at 44.6% per year. Success doesn’t require the most complex agentic AI setup — it requires starting with one agent, one task, and iterating until it works reliably.

The agentic AI tech is ready for production but young enough that governance and evaluation are still being worked out. Eighty-six percent of firms are raising their AI budgets in 2026 (Salesmate, 2026), so the question isn’t whether to start — it’s whether to start now or play catch-up later.

Build your first agent this week — a single ReAct agent with two tools, solving one real problem from your workflow.

Continue Learning

Practical Guides:

- AI Marketing Kit for Builders

- Zero-Dollar Marketing Stack for 2026

Career & Industry:

- Your Personal AI Team: How Solo Founders Will Run Entire Businesses With AI Agents by 2028

- I Built a Multi-Agent Code Review Skill for Claude Code — Here’s How It Works

- Why Indie Hackers Fail at Marketing (And What to Do Instead)