The Rise of AI-Native Apps: Why Architecture Beats Features

Most software companies are adding AI to existing products — and they’re doing it backwards. The winners were designed from the ground up around AI as the core interaction model. Cursor went from zero to $2 billion ARR in under two years (Bloomberg, 2026). Perplexity grew to 45 million active users by rethinking search from first principles (DemandSage, 2026). That distinction matters.

These products were never traditional architectures with AI sprinkled in, and the industry hasn’t fully caught up to what that changes.

This piece breaks down why AI-native architecture creates structurally different products, what the data shows about their performance, and how builders should think about the next generation of software.

TL;DR: AI-native apps — products built with AI as the core architecture, not a feature — are dramatically outperforming bolt-on competitors. Cursor reached $2B ARR in under 2 years (Bloomberg, 2026), and AI startups captured nearly half of all global venture funding in 2026.

What Does the Mainstream Advice Say About Building AI Products?

The prevailing wisdom says: take your existing product and add AI features. A chatbot here, an autocomplete there, maybe a summarization button. According to Menlo Ventures’ 2026 State of Generative AI report, 72% of enterprises adopted this approach — layering AI onto existing software stacks (Menlo Ventures, 2026). The logic seems sound. You already have users, distribution, and product-market fit. Why rebuild from scratch?

This “bolt-on” philosophy dominates because it’s safer. Enterprise software vendors, SaaS incumbents, and large tech companies have spent years urging their customers to “AI-enable” existing workflows. Salesforce added Einstein. Microsoft embedded Copilot. Adobe shipped Firefly. The message is consistent: AI is a feature, not a foundation.

The approach has respectable defenders. Established platforms argue that their existing data, integrations, and user habits create switching costs no startup can overcome. And for a while, it seemed to work. But the data from 2026-2026 tells a different story.

Why Is the Bolt-On Approach Fundamentally Flawed?

The core problem with bolting AI onto traditional architectures is that the product’s interaction model doesn’t change. You’re still navigating menus, clicking buttons, and filling forms — with an occasional AI suggestion. That’s not transformation. That’s decoration.

The Retention Gap Is Real

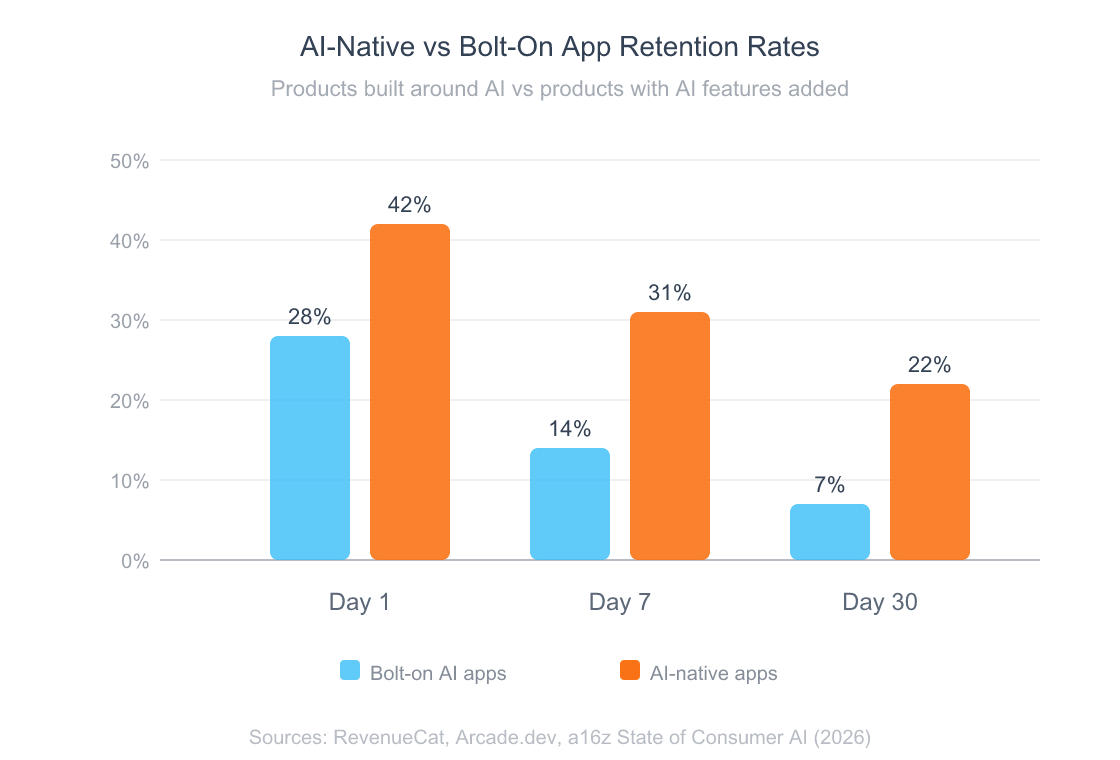

AI-powered apps that simply add features to existing products struggle to retain users. RevenueCat’s 2026 analysis found that AI apps see 21.1% annual retention compared to 30.7% for non-AI apps, with subscribers canceling annual plans 30% faster at the median (RevenueCat, 2026). Why? Because when AI is just a feature, users try it once, find it underwhelming in context, and stop using it. The novelty wears off when the underlying experience hasn’t changed.

Architecture Constrains the Product

When you add AI to a CRUD app, you’re limited by the existing data model, UI patterns, and user expectations. Cursor didn’t add AI autocomplete to VS Code and call it a day. They rebuilt the entire editor interaction around AI — multi-file editing, repo-level context, natural language commands. That’s why Cursor surpassed 1 million daily users and achieved a 36% free-to-paid conversion rate (TechCrunch, 2026). You can’t build that kind of product by adding a chatbot sidebar to an existing IDE.

The “AI Wrapper” Dismissal Misses the Point

The tech community loves to dismiss startups as “just an AI wrapper.” And for some, that criticism is fair — a thin UI over a GPT API call isn’t a product. But the blanket dismissal conflates lazy wrappers with genuine architectural innovation. Perplexity isn’t a wrapper around a search API. It’s a completely reimagined information retrieval system with citation generation, multi-source synthesis, and a conversational interface that eliminates ten blue links entirely. The architecture IS the product.

Citation Capsule: The bolt-on approach fails because AI as a feature doesn’t change the interaction model. AI-powered bolt-on apps see 21.1% annual retention versus 30.7% for traditional apps, with annual subscriptions canceled 30% faster (RevenueCat, 2026). Architecture, not features, determines whether AI adds durable value.

What Does the Data Actually Show About AI-Native Performance?

AI-native apps aren’t just surviving — they’re growing at speeds the software industry has never seen. Cursor is the fastest-growing SaaS company in history by ARR trajectory, doubling revenue approximately every two months to hit $2 billion ARR by February 2026 (Bloomberg, 2026). That’s not incremental improvement. That’s a different category of growth.

Look at the numbers across the AI-native cohort. Perplexity reached $200 million ARR by September 2026 and is projected to hit $656 million in 2026, processing 600 million search queries per month (DemandSage, 2026). Midjourney generated $500 million in revenue in 2026, up from $300 million in 2026, with a team of fewer than 100 people (DemandSage, 2026). These companies didn’t add AI to an existing image editor or search engine. They built entirely new interaction paradigms.

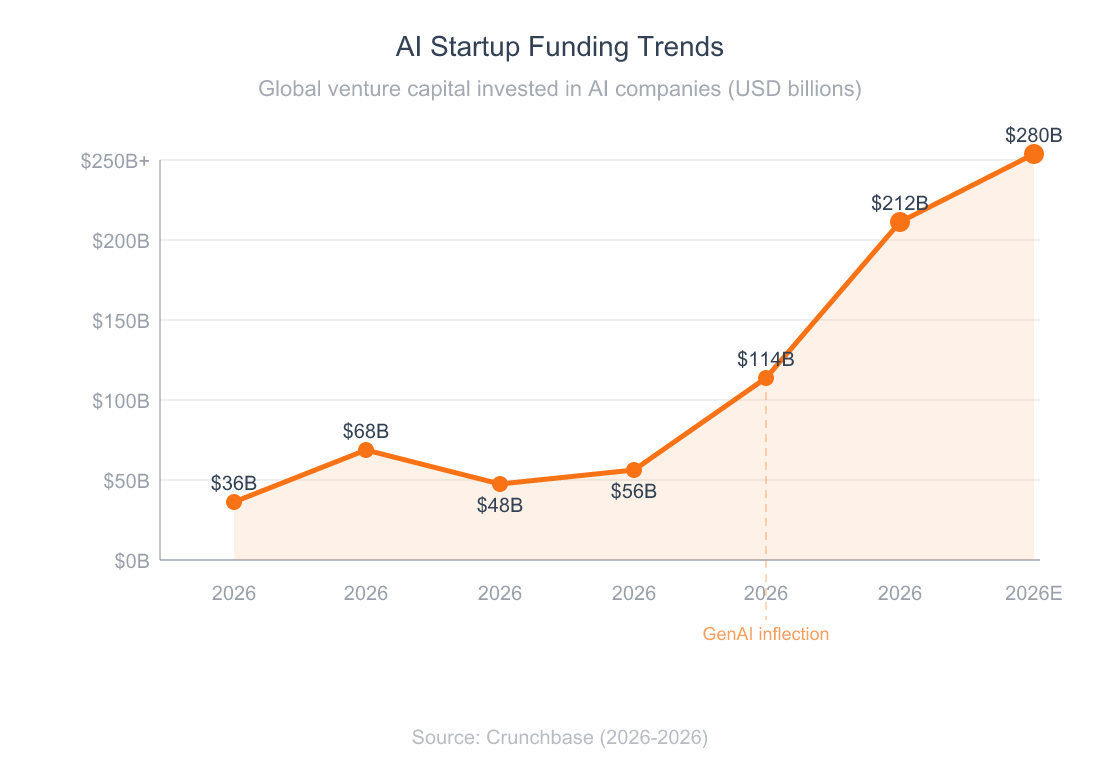

The venture capital market confirms this shift. In 2026, AI startups captured $212 billion in venture funding — up 85% year-over-year and representing nearly half of all global venture capital (Crunchbase, 2026). Sequoia Capital reported that investment in AI agent companies alone reached $28 billion, a fourfold increase from 2026 (Sequoia Capital, 2026).

Building Growth Engine as an AI-native product taught me something the data confirms: when AI is the architecture, the product evolves at the speed of the models. Every time a foundation model improves, Growth Engine’s output quality improves automatically — without shipping a single feature update. Bolt-on products don’t get that advantage because AI sits at the periphery, not the core.

What does this mean for the industry? The startups winning aren’t the ones with the best AI features. They’re the ones that rethought entire workflows around what AI makes possible.

AI-native apps retain users at roughly 3x the rate of bolt-on AI apps by Day 30.

AI startup funding nearly quadrupled from 2026 to 2026, with an estimated $280B projected for 2026.

Citation Capsule: AI-native products are growing at historically unprecedented rates. Cursor reached $2B ARR in under two years — the fastest SaaS trajectory ever recorded (Bloomberg, 2026). Globally, AI startups captured $212B in venture funding in 2026, representing nearly half of all venture capital deployed.

What’s the Better Way to Build AI Products?

Build AI-native from the start. That means designing the product’s interaction model, data architecture, and user experience around what AI makes possible — not around what traditional software did before.

Here are the core principles that distinguish AI-native architecture:

-

Streaming-first interfaces. AI-native apps don’t show loading spinners and return completed results. They stream responses in real time, giving users immediate feedback and the ability to steer output mid-generation. The Vercel AI SDK, used by 74% of surveyed developers for AI projects, is built entirely around this pattern (Vercel, 2026).

-

Context-aware systems. Instead of treating each interaction as stateless, AI-native products maintain persistent context. Google’s Agent Development Kit separates storage from presentation and scopes context so each agent sees only what it needs (Google Developers Blog, 2026). This is what makes Cursor’s repo-level understanding possible.

-

Agent-based workflows. Rather than requiring users to click through multi-step processes, AI-native products delegate entire workflows to autonomous agents. Sequoia Capital reported $28 billion invested in AI agent companies in 2026 — a 4x increase from 2026 (Sequoia Capital, 2026).

-

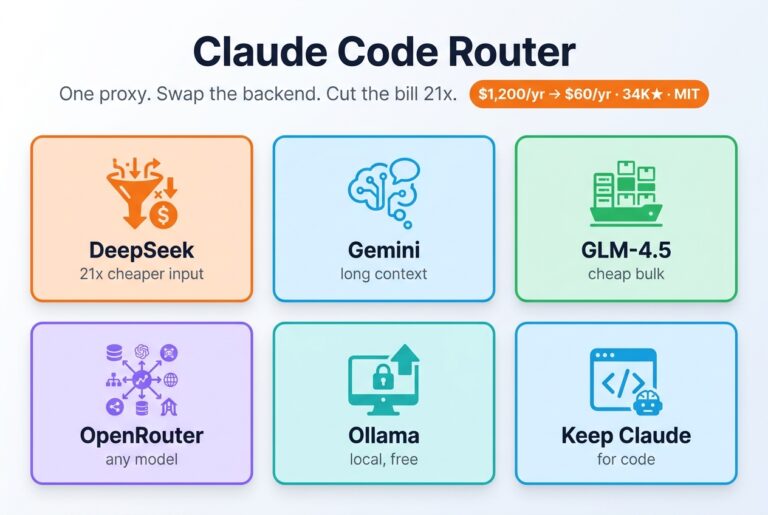

Multi-model orchestration. The best AI-native products don’t depend on a single model. They route tasks to the best model for each job — fast models for simple completions, reasoning models for complex analysis. a16z calls these “thick” AI apps (a16z, 2026).

-

Proprietary data flywheels. Every user interaction generates training signal. AI-native products use this data to improve continuously, creating a compounding advantage that thin wrappers can’t replicate.
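To make the streaming-first principle concrete, here is a minimal sketch of token-by-token delivery with mid-generation steering. It deliberately avoids any real SDK: `streamTokens`, `OnToken`, and the in-memory token array are hypothetical stand-ins for whatever streaming framework you use, and a production version would read from an asynchronous network stream.

```typescript
// Hypothetical sketch: these names are illustrative, not a real SDK API.

// The handler receives each token as it arrives and returns false to stop early.
type OnToken = (token: string, textSoFar: string) => boolean;

// Simulates a model emitting tokens one at a time. A real app would read
// them from a network stream instead of an in-memory array.
function streamTokens(tokens: string[], onToken: OnToken): string {
  let text = "";
  for (const token of tokens) {
    text += token;
    // Hand each token to the UI immediately instead of waiting for the end.
    if (!onToken(token, text)) {
      break; // The user steered away, so stop generating.
    }
  }
  return text;
}

// Usage: render tokens as they arrive and stop after the first sentence.
const renderedTokens: string[] = [];
const streamedText = streamTokens(
  ["Ship ", "the ", "core. ", "Then ", "add ", "UI."],
  (token, textSoFar) => {
    renderedTokens.push(token);
    return textSoFar.trim().slice(-1) !== ".";
  }
);
console.log(streamedText); // "Ship the core. " (stopped after 3 of 6 tokens)
```

The point of the design is that the consumer, not the producer, decides when generation ends, which is what lets a user redirect output before it completes.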

When we built Growth Engine, we didn’t add AI to a traditional marketing template tool. We built the entire product around a single AI pipeline: give it your URL, and it generates a complete marketing kit. Every generation feeds back into improving output quality. That architecture decision — AI at the core, not the edge — is why the product keeps getting better without us shipping feature updates.

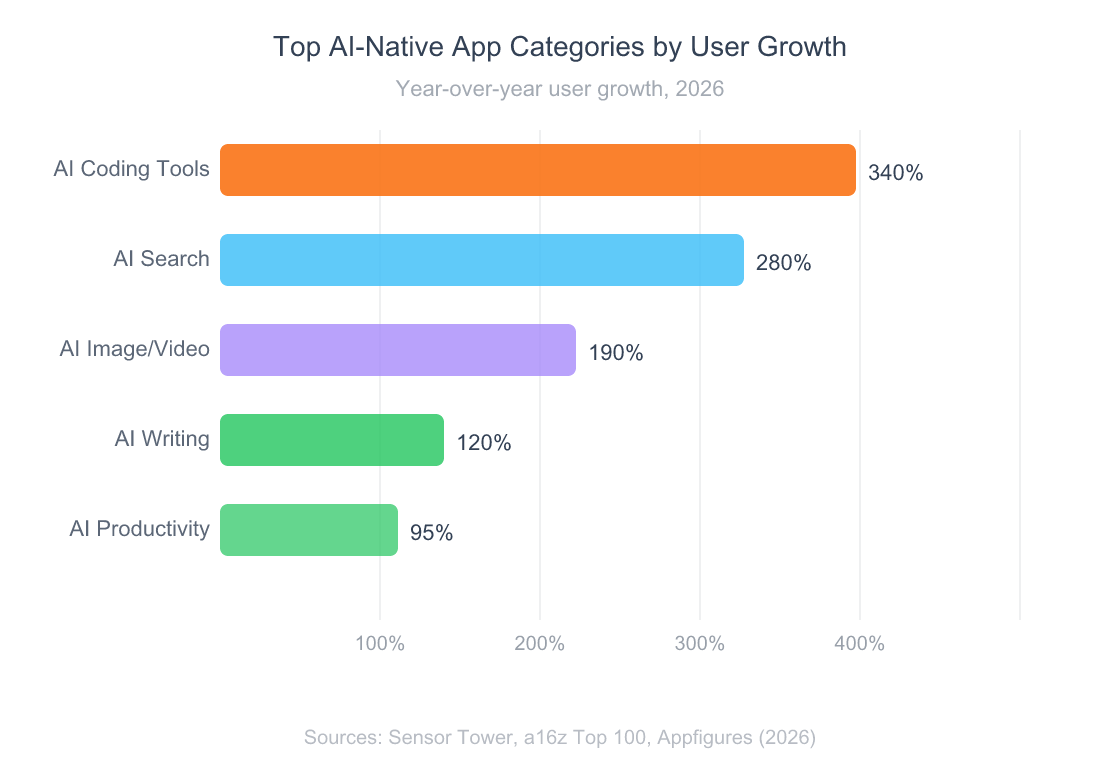

AI coding tools lead all categories with 340% YoY user growth, followed by AI search at 280%.

How Do You Actually Build AI-Native From Scratch?

Start by killing your assumptions about what a software product looks like. Your first action should be defining the core AI interaction — the single prompt or pipeline that delivers your product’s primary value — before designing any UI around it.

Here’s a practical roadmap:

- Define the AI-first interaction (Week 1). What can your product do in a single AI call that would take a user 30 minutes manually? Build that pipeline first. Don’t build a dashboard, settings page, or user management system. Build the AI core.

- Add streaming and real-time feedback (Week 2). Use the Vercel AI SDK or a similar streaming framework to deliver results token by token. 75% of engineering teams report that adopting structured AI frameworks reduces development time by over 30% (Strapi, 2026). Don’t make users wait for a completed response.

- Build context persistence (Week 3-4). Store conversation history, user preferences, and domain context so your product gets smarter with every interaction. This is where the data flywheel begins.

- Implement multi-model routing (Month 2). Route simple tasks to fast, cheap models and complex tasks to reasoning models. This keeps costs manageable while maximizing output quality.

- Add the traditional UI layer last (Month 2-3). Only after the AI core works should you build the conventional product surfaces: dashboards, settings, collaboration features. These support the AI interaction. They don’t replace it.
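The multi-model routing step in the roadmap can be sketched roughly as follows. Everything specific here is an assumption for illustration: the model ids, the prices, the 2,000-character threshold, and the four-characters-per-token estimate are placeholders, not real products or published rates.

```typescript
// Hypothetical sketch: model ids, prices, and thresholds are placeholders.
interface ModelOption {
  id: string;
  costPer1kTokensUsd: number;
}

const FAST_MODEL: ModelOption = { id: "fast-small", costPer1kTokensUsd: 0.1 };
const REASONING_MODEL: ModelOption = { id: "reasoning-large", costPer1kTokensUsd: 2.0 };

interface Task {
  prompt: string;
  needsMultiStepReasoning: boolean;
}

// Send a task to the reasoning model only when it is flagged as complex
// or its prompt is long; everything else goes to the cheap, fast model.
function routeTask(task: Task, longPromptChars: number = 2000): ModelOption {
  if (task.needsMultiStepReasoning || task.prompt.length > longPromptChars) {
    return REASONING_MODEL;
  }
  return FAST_MODEL;
}

// Rough prompt cost, assuming about 4 characters per token (a rule of thumb).
function estimateCostUsd(task: Task, model: ModelOption): number {
  const approxTokens = Math.ceil(task.prompt.length / 4);
  return (approxTokens / 1000) * model.costPer1kTokensUsd;
}

// Usage: a short request and a flagged analytical request take different routes.
const simpleTask: Task = { prompt: "Suggest a headline for my landing page.", needsMultiStepReasoning: false };
const complexTask: Task = { prompt: "Compare three pricing strategies and justify one.", needsMultiStepReasoning: true };
console.log(routeTask(simpleTask).id);  // "fast-small"
console.log(routeTask(complexTask).id); // "reasoning-large"
```

In practice the routing signal is usually richer than a flag and a length check, but keeping the router a pure function makes it easy to test and to swap models as prices change.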

How will you know it’s working? Track time-to-value: how quickly does a new user get their first meaningful result? AI-native products should deliver value in under 60 seconds. If your user needs to configure settings, read documentation, or complete onboarding before they see AI output, you’ve built it backwards.
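As a rough illustration of tracking time-to-value, the sketch below computes the seconds between signup and first AI result per user, plus the share of signups that land under the 60-second target. The event names (`signup`, `first_ai_result`) and the log shape are assumptions for this example, not a reference to any particular analytics tool.

```typescript
// Hypothetical event shape: field and event names are assumptions.
interface ProductEvent {
  userId: string;
  kind: "signup" | "first_ai_result";
  atMs: number; // Unix timestamp in milliseconds
}

// Seconds from signup to first AI result, for each user who reached one.
function timeToValueSeconds(log: ProductEvent[]): { [userId: string]: number } {
  const signupAt: { [userId: string]: number } = {};
  const ttv: { [userId: string]: number } = {};
  // Work on a time-ordered copy so the input log is not mutated.
  const ordered = log.slice().sort((a, b) => a.atMs - b.atMs);
  ordered.forEach((e) => {
    const start = signupAt[e.userId];
    if (e.kind === "signup" && start === undefined) {
      signupAt[e.userId] = e.atMs;
    } else if (e.kind === "first_ai_result" && start !== undefined && ttv[e.userId] === undefined) {
      ttv[e.userId] = (e.atMs - start) / 1000;
    }
  });
  return ttv;
}

// Share of signed-up users whose first result arrived within the target window.
function shareWithinTarget(log: ProductEvent[], targetSeconds: number = 60): number {
  const ttv = timeToValueSeconds(log);
  const signedUp: { [userId: string]: boolean } = {};
  log.forEach((e) => {
    if (e.kind === "signup") signedUp[e.userId] = true;
  });
  const total = Object.keys(signedUp).length;
  if (total === 0) return 0;
  const fast = Object.keys(ttv).filter((id) => {
    const seconds = ttv[id];
    return seconds !== undefined && seconds <= targetSeconds;
  }).length;
  return fast / total;
}
```

Users who sign up and never see a result count against the share, which is deliberate: a product that loses people during onboarding should not look healthy on this metric.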

When Does AI-Native Not Make Sense?

Not every product should be AI-native. Some domains require deterministic outputs — financial accounting, regulatory compliance, safety-critical systems. When the cost of an AI hallucination is a regulatory fine or a safety incident, traditional architectures with AI as a verification layer make more sense than AI at the core.

Cost is another real constraint. AI-native apps consume inference compute with every interaction. Midjourney’s GPU costs are substantial, even at $500 million in revenue. If your product’s unit economics don’t support per-interaction inference costs, bolt-on AI for specific high-value features may be the pragmatic choice.
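A quick way to sanity-check whether unit economics support per-interaction inference is to compute the margin left after inference costs. The sketch below uses made-up placeholder numbers (a $20 plan, 400 interactions a month, $0.02 per interaction); none of them come from the companies discussed here.

```typescript
// Hypothetical sketch: all figures below are placeholders for illustration.
interface PlanEconomics {
  monthlyPriceUsd: number;
  interactionsPerUserPerMonth: number;
  inferenceCostPerInteractionUsd: number;
}

// Fraction of subscription revenue left after paying for inference.
function marginAfterInference(plan: PlanEconomics): number {
  const inferenceCostUsd =
    plan.interactionsPerUserPerMonth * plan.inferenceCostPerInteractionUsd;
  return (plan.monthlyPriceUsd - inferenceCostUsd) / plan.monthlyPriceUsd;
}

// Highest per-interaction inference cost that still meets a target margin.
function maxCostPerInteractionUsd(plan: PlanEconomics, targetMargin: number): number {
  return (plan.monthlyPriceUsd * (1 - targetMargin)) / plan.interactionsPerUserPerMonth;
}

// Usage with placeholder numbers.
const examplePlan: PlanEconomics = {
  monthlyPriceUsd: 20,
  interactionsPerUserPerMonth: 400,
  inferenceCostPerInteractionUsd: 0.02,
};
console.log(marginAfterInference(examplePlan).toFixed(2));          // "0.60"
console.log(maxCostPerInteractionUsd(examplePlan, 0.7).toFixed(3)); // "0.015"
```

If the second number sits below what your models actually cost per call, that is a signal to route more traffic to cheaper models or to limit AI to the highest-value features.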

There’s also a talent consideration. Building AI-native requires a different skill set than traditional software engineering. Prompt engineering, model evaluation, context window management — these aren’t skills most engineering teams have yet. The transition is happening, but we’re honestly still early. The language layer matters too: AI codegen tools produce dramatically better output against typed codebases, which is partly why I lean toward TypeScript over plain JavaScript when starting an AI-native product.

The strongest argument for bolt-on AI remains distribution. If you have 10 million users on a traditional product, adding AI features serves them immediately. Rebuilding from scratch means starting user acquisition over. That trade-off is real, even if the long-term trajectory favors AI-native.

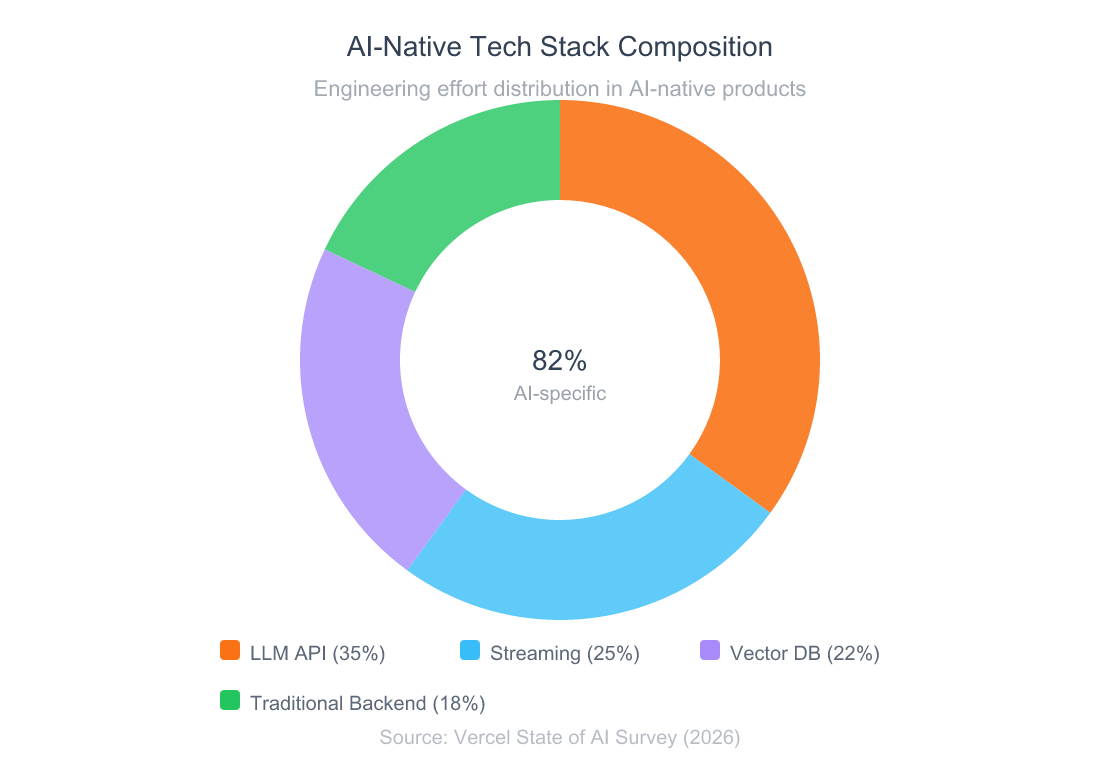

In AI-native apps, 82% of engineering effort goes to AI-specific components — LLM orchestration, streaming, and vector databases.

Frequently Asked Questions

But don’t established platforms with AI features have more data and distribution?

They do — and that’s a real advantage for the next 12-18 months. But distribution doesn’t fix architectural constraints. Cursor overtook GitHub Copilot in developer preference despite Copilot launching two years earlier with GitHub’s entire distribution network. Cursor crossed $2B ARR because its architecture enables repo-level context that Copilot’s bolt-on design couldn’t match (Bloomberg, 2026). Distribution buys time. Architecture determines outcomes.

What if we’ve already invested heavily in adding AI to our existing product?

Don’t scrap everything. Start by identifying the one workflow where AI could be the primary interaction rather than an assistant. Build a standalone AI-native experience for that single workflow and measure engagement against the bolt-on version. 75% of engineering teams report that adopting structured AI frameworks reduces development time by over 30% (Strapi, 2026), so experimentation is cheaper than you think.

Isn’t calling everything AI-native just marketing hype?

Fair skepticism. The label does get misused. The test is simple: remove the AI from the product. If it still works as a traditional tool, it’s bolt-on. If the product ceases to function entirely, it’s AI-native. Perplexity without AI isn’t a search engine — it’s nothing. Cursor without AI isn’t a code editor — it’s a worse VS Code. That’s the distinction that matters.

How do you respond to the “AI wrapper” criticism from investors?

The wrapper criticism correctly identifies products with no defensible value beyond a thin API call. But AI-native architecture is the opposite of a wrapper. Sequoia Capital reported that $28 billion was invested in AI agent companies in 2026, a sign that investors expect vertical, deeply architected AI products to build compounding data moats (Sequoia Capital, 2026). The distinction is between rented intelligence and owned architecture.

The Industry Needs to Rethink What Software Looks Like

Architecture beats features. Every major data point from 2026-2026 confirms it — from Cursor’s $2B ARR to Perplexity’s 45 million users to the $212 billion in AI venture funding. The companies winning this era didn’t add AI to old paradigms. They built new ones.

The broader shift we need is a change in default assumptions. When a founder or product team starts a new project in 2026, the first question shouldn’t be “where do we add AI?” It should be “what does this product look like if AI is the core experience?” That reframing changes everything — the tech stack, the interaction model, the team composition, the competitive dynamics.

Imagine a world where every category of software — from accounting to creative tools to developer infrastructure — gets rebuilt around what AI actually makes possible. We’re not there yet. But the trajectory is unmistakable, and the window for incumbents to catch up is closing fast.

If you’re building something new, build it AI-native. The data says you’ll be glad you did.
