OpenTelemetry GenAI: A Practical Guide to Tracing AI Agents Without Leaking PII

Your agent runs three tool calls, two LLM completions, and one vector search to answer a single user question. Something is slow. Something is expensive. And one of the prompts contains a customer email address you really, really do not want sitting in a trace backend forever.

This is the new shape of “observability.” It’s not just RPC latency. It’s a tree of spans where the leaves carry user content, the costs are token-denominated, and the failure modes are weird. OpenTelemetry’s GenAI semantic conventions are the standard the industry is converging on to handle that, and the rules around where to put prompts are non-obvious enough that most first-attempt instrumentations get them wrong.

Key Takeaways

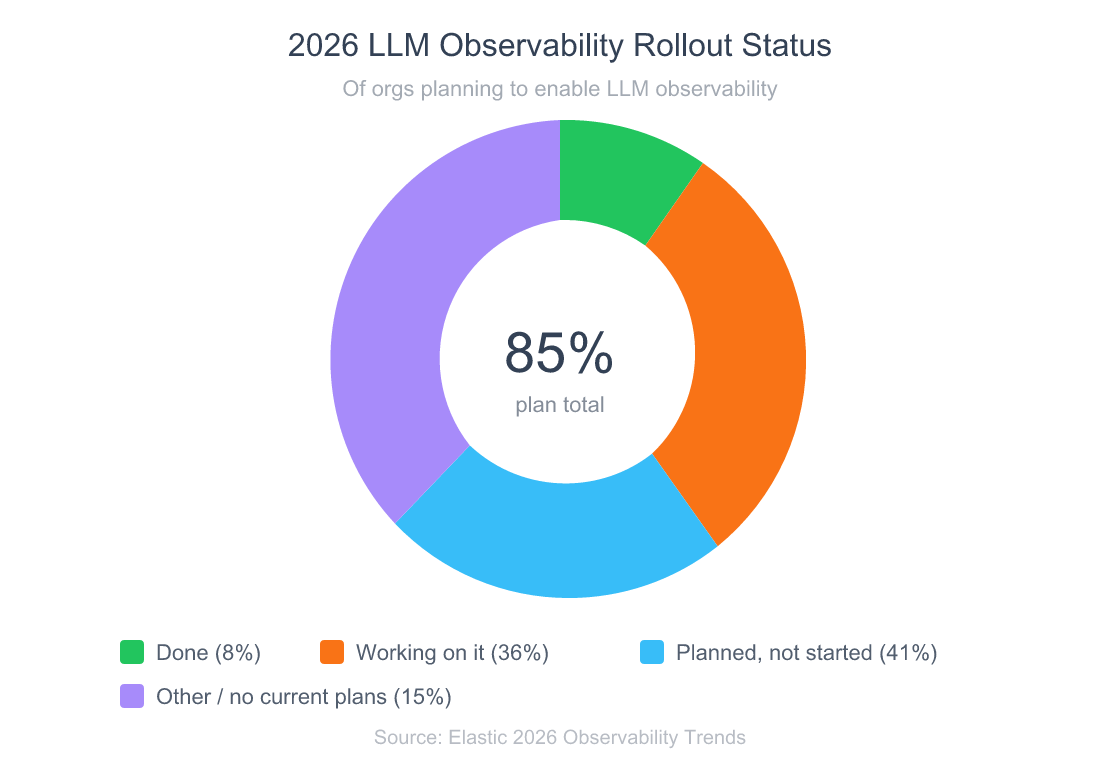

- 85% of organizations plan to enable LLM observability, but only 8% have finished the rollout (Elastic, 2026).

- The OTel GenAI conventions are still in Development status, but Datadog, Honeycomb, and major frameworks already emit native gen_ai.* spans.

- Prompts and completions can live as span attributes (gen_ai.input.messages, gen_ai.output.messages) or as opt-in events (gen_ai.client.inference.operation.details); the right choice depends on size, PII, and your retention policy.

- Required metrics are gen_ai.client.token.usage and gen_ai.client.operation.duration, both histograms, with required attributes gen_ai.operation.name and gen_ai.provider.name.

- Redact at the Collector with the redaction or transform processor before the data leaves your perimeter.

For the broader request-flow context behind tracing distributed services, see API security layers including request authenticity and backend hardening.

What Are OpenTelemetry GenAI Semantic Conventions?

The OpenTelemetry GenAI semantic conventions define a shared vocabulary: span names, attribute keys, metric names, and event shapes for tracing generative AI workloads. The spec is currently in Development status, with most attributes still marked experimental as of May 2026 (OpenTelemetry, 2026). That’s the contract every vendor and framework is now mapping to.

Coverage breaks into four areas: client spans for LLM calls and retrieval, agent spans for invocations and workflows, events for prompt and completion bodies, and metrics for token usage and latency. The OpenTelemetry SIG started this work in April 2024, and by early 2026 the conventions cover LLM client spans, agent spans, events, and metrics across the full lifecycle (OpenTelemetry, 2026).

Vendor adoption arrived fast. Datadog shipped native support in OTel SDK/Collector v1.37 on December 1, 2025, automatically mapping gen_ai.request.model, gen_ai.usage.input_tokens, gen_ai.provider.name, and gen_ai.operation.name into its LLM Observability product (Datadog, 2026). Honeycomb followed with general availability of Honeycomb Metrics and AI-focused Agent Skills in March 2026 (Honeycomb, 2026). Jaeger v2 rebuilt its core on the OpenTelemetry Collector and pivoted toward AI agent observability (CNCF, 2026).

What does that mean for you? You can instrument once and switch backends without rewriting traces. That’s the whole point.

Why Does the Convention Matter Right Now?

A 2026 Elastic Observability survey found that 85% of organizations plan to enable LLM observability, but only 8% have finished the rollout, 36% are working on it, and 41% have plans but haven’t started (Elastic, 2026). Standardization windows like this one are short, and first movers set the schema everyone else inherits.

Grafana’s 2026 Observability Survey, with 1,300+ practitioners across 76 countries, tells the same story from a different angle. Just 14% currently use observability for LLM-based production workloads, up from 5% a year earlier. The “not on my radar” cohort dropped from 42% to 29% in twelve months (Grafana Labs, 2026). Demand is accelerating faster than tooling.

Gartner projects LLM observability investments will reach 50% of GenAI deployments by 2028, up from just 15% today (Gartner, 2026). The trajectory is steep, and it’s funded. So the question is not whether to instrument, it’s which schema to instrument against. Picking the OTel GenAI conventions today means your traces still parse in three years.

For why standards-based instrumentation reduces vendor lock-in, see comparison of standardized integrations across providers.

How Do You Structure Spans for an Agent Run?

The spec defines a clean hierarchy: agent invocations contain LLM inference, tool execution, and retrieval as child spans. An invoke_agent span at the top, named "invoke_agent {gen_ai.agent.name}" when the agent name is available, parents the actual work (OpenTelemetry, 2026). Beneath it, each LLM call gets its own span named "{gen_ai.operation.name} {gen_ai.request.model}", each tool call gets "execute_tool {gen_ai.tool.name}", and each retrieval gets "{operation_name} {data_source.id}".

Span kinds matter more than people realize. Inference spans should be CLIENT in most cases but INTERNAL when the model runs in the same process. Tool execution is always INTERNAL. It represents code your application owns. Embeddings and retrieval are CLIENT because they cross a process boundary to a vector store (OpenTelemetry, 2026). Get this wrong and your service maps draw arrows in the wrong direction.

Here is a minimal Python sketch. It assumes conversation_id, openai_client, lookup_order, and order_id are defined by the surrounding application:

    from opentelemetry import trace
    from opentelemetry.semconv._incubating.attributes import gen_ai_attributes as ga

    tracer = trace.get_tracer("my-agent")

    # Top-level agent span: parents every LLM and tool span in this run.
    with tracer.start_as_current_span(
        "invoke_agent customer-support",
        kind=trace.SpanKind.INTERNAL,
        attributes={
            ga.GEN_AI_OPERATION_NAME: "invoke_agent",
            ga.GEN_AI_AGENT_NAME: "customer-support",
            ga.GEN_AI_AGENT_ID: "agt_8f2a",
            ga.GEN_AI_CONVERSATION_ID: conversation_id,
        },
    ):
        # LLM call: CLIENT, because the request leaves the process.
        with tracer.start_as_current_span(
            "chat gpt-4o",
            kind=trace.SpanKind.CLIENT,
            attributes={
                ga.GEN_AI_OPERATION_NAME: "chat",
                ga.GEN_AI_PROVIDER_NAME: "openai",
                ga.GEN_AI_REQUEST_MODEL: "gpt-4o",
            },
        ) as llm_span:
            response = openai_client.chat.completions.create(...)
            # Response-side attributes are only known after the call returns.
            llm_span.set_attribute(ga.GEN_AI_RESPONSE_MODEL, response.model)
            llm_span.set_attribute(ga.GEN_AI_USAGE_INPUT_TOKENS, response.usage.prompt_tokens)
            llm_span.set_attribute(ga.GEN_AI_USAGE_OUTPUT_TOKENS, response.usage.completion_tokens)

        # Tool call: INTERNAL, because it runs code the application owns.
        with tracer.start_as_current_span(
            "execute_tool lookup_order",
            kind=trace.SpanKind.INTERNAL,
            attributes={
                ga.GEN_AI_OPERATION_NAME: "execute_tool",
                ga.GEN_AI_TOOL_NAME: "lookup_order",
            },
        ):
            order = lookup_order(order_id)

From the field: When we first added agent instrumentation in production, we forgot to set kind=CLIENT on the OpenAI call. Datadog then grouped the LLM span as an internal database operation in our service map. Five minutes of head-scratching, two minutes to fix. Span kind is the cheapest piece of metadata to get right and the most expensive to debug when wrong.
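The naming and span-kind rules above can be captured in a small helper so that every call site applies them the same way. This is a minimal sketch, not part of any OpenTelemetry package: the Kind enum is a dependency-free stand-in for opentelemetry.trace.SpanKind, and span_name and span_kind are hypothetical helper names.

```python
from enum import Enum
from typing import Optional


class Kind(Enum):
    """Stand-in for opentelemetry.trace.SpanKind (assumption: keeps the sketch dependency-free)."""
    CLIENT = "client"
    INTERNAL = "internal"


def span_name(operation: str, target: Optional[str] = None) -> str:
    """Build '{operation} {target}', falling back to the operation alone when no target is known."""
    return f"{operation} {target}" if target else operation


def span_kind(operation: str, in_process: bool = False) -> Kind:
    """Pick a span kind following the rules described in the text."""
    if operation == "execute_tool":
        return Kind.INTERNAL  # tool code runs inside your application
    if in_process:
        return Kind.INTERNAL  # e.g. a model loaded into the same process
    return Kind.CLIENT        # remote model, embedding, or vector-store call


print(span_name("chat", "gpt-4o"), span_kind("chat").name)
print(span_name("execute_tool", "lookup_order"), span_kind("execute_tool").name)
```

In real instrumentation the returned kind would be mapped to trace.SpanKind and passed to start_as_current_span alongside the generated name.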

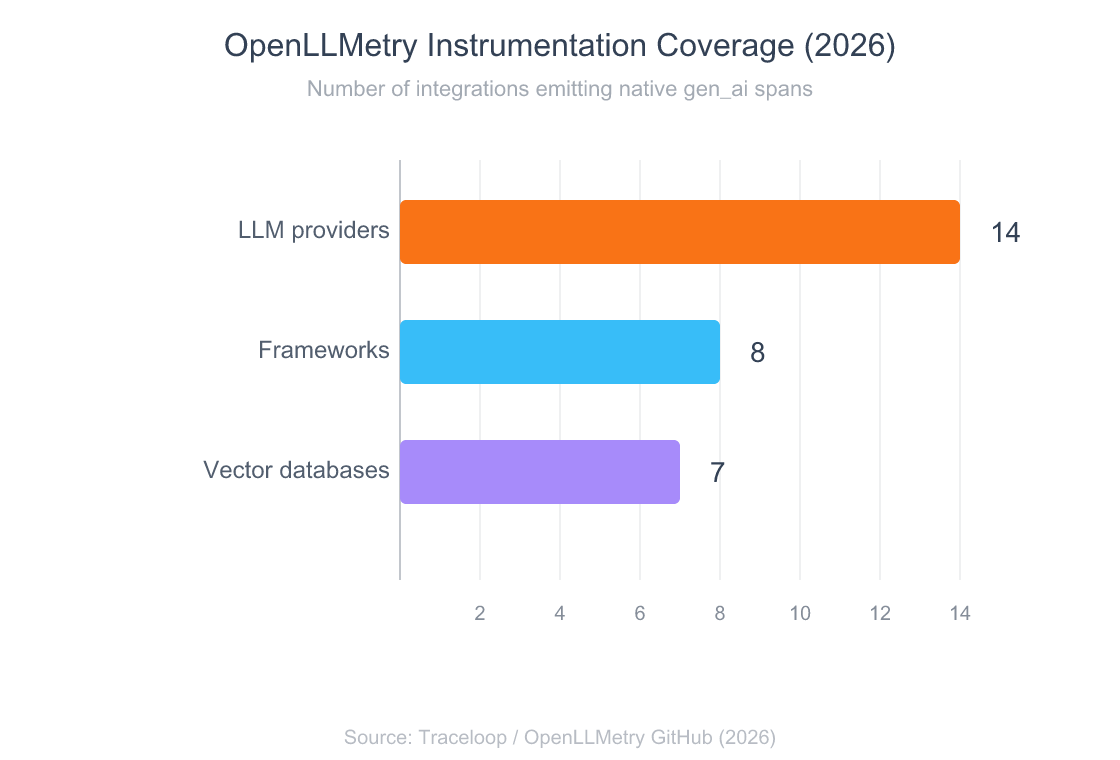

LangSmith now provides end-to-end OpenTelemetry support for LangChain and LangGraph, automatically generating native gen_ai spans without manual wiring (LangChain, 2026). For framework-agnostic auto-instrumentation, the OpenLLMetry project, whose semantic conventions are now part of OpenTelemetry, covers OpenAI, Anthropic, Cohere, Bedrock, Vertex, LangChain, LlamaIndex, LangGraph, CrewAI, and Haystack. It carries Apache 2.0 licensing and 7,100+ GitHub stars as of mid-2026 (Traceloop, 2026).

For deeper context on how agents call tools, see MCP and A2A protocol architecture for tool and inter-agent communication.

Where Do Prompts Belong — Attributes Or Events?

This is the question that catches every team. The spec lets you record prompt and completion content two different ways: as span attributes (gen_ai.input.messages, gen_ai.output.messages, gen_ai.system_instructions) or as the opt-in event gen_ai.client.inference.operation.details, which “could be used to store input and output details independently from traces” (OpenTelemetry, 2026). Both paths are sanctioned. They aren’t equivalent.

Why prefer events for content? Three reasons. First, size: a chat history can be tens of thousands of tokens, and span attributes are the wrong place for blobs that big. Second, cardinality: every unique prompt becomes a unique attribute value, which destroys aggregation in metric backends if you’re not careful. Third, sampling: events can ride a different pipeline than spans, so you can keep 100% of structural traces while sampling content at 5%.
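The sampling argument is easier to see in code. The sketch below keeps content for a fixed fraction of traces by hashing the trace ID, so every service that sees the same trace makes the same decision. It is one possible approach under stated assumptions: keep_content is a hypothetical helper, and the trace ID is assumed to be available as a hex string.

```python
import hashlib


def keep_content(trace_id: str, rate: float) -> bool:
    """Return True for roughly `rate` of trace IDs, deterministically per ID."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a float in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate


# Structural spans are always emitted; only the content decision is sampled.
trace_id = "4bf92f3577b34da6a3ce929d0e0e4736"  # example value
print("capture content:", keep_content(trace_id, 0.05))
```

Because the decision depends only on the trace ID, the prompt and the completion for one request are either both kept or both dropped.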

The spec is explicit about the sensitivity: gen_ai.input.messages, gen_ai.output.messages, gen_ai.system_instructions, gen_ai.tool.call.arguments, and gen_ai.tool.call.result all carry warnings that they’re “likely to contain sensitive information including user/PII data” (OpenTelemetry, 2026). Content capture is opt-in by design. Default off, turn on deliberately.

A pattern that works in production: emit the structural span every time, attach token counts and model IDs as attributes, and only attach prompt content when an explicit opt-in flag is set per request. That gives you 100% of the trace shape, the data you need for latency debugging, and only the content you need for evaluation runs.
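A minimal sketch of that pattern follows. It builds the attribute set for one inference span and adds prompt content only when the caller passes an explicit flag. The function name inference_attributes and the choice to serialize messages as a JSON string are assumptions made for illustration, not something the conventions prescribe.

```python
import json
from typing import Any, Dict, List


def inference_attributes(
    model: str,
    input_tokens: int,
    output_tokens: int,
    messages: List[Dict[str, Any]],
    capture_content: bool = False,  # default off; turned on per request
) -> Dict[str, Any]:
    """Return span attributes; content is included only on explicit opt-in."""
    attrs: Dict[str, Any] = {
        "gen_ai.operation.name": "chat",
        "gen_ai.request.model": model,
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
    }
    if capture_content:
        # Assumption: content is stored as a JSON string.
        attrs["gen_ai.input.messages"] = json.dumps(messages)
    return attrs


msgs = [{"role": "user", "content": "Where is my order?"}]
print(sorted(inference_attributes("gpt-4o", 12, 34, msgs)))
print(sorted(inference_attributes("gpt-4o", 12, 34, msgs, capture_content=True)))
```

The structural fields are identical in both calls, so dashboards built on token counts and model IDs do not change when content capture is toggled.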

For the data-modeling instincts behind this trade-off, see database isolation and the cost of writing large blobs to indexed columns.

What Metrics Should You Emit?

The conventions define two required client-side metrics: gen_ai.client.token.usage and gen_ai.client.operation.duration. Both are histograms. Token usage uses the unit {token} with bucket boundaries spanning 1 to 67,108,864: fourteen boundaries, each four times the previous one, covering nearly eight orders of magnitude and designed for a world where individual requests range from a one-token completion to multi-million-token context windows (OpenTelemetry, 2026).
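The boundary list is easy to reproduce, since 1 through 67,108,864 in fourteen steps is the sequence of powers of four. The sketch below generates it and shows which bucket a given token count would land in, assuming the upper-inclusive bucket semantics of OpenTelemetry explicit-bucket histograms. The helper name bucket_index is hypothetical.

```python
from bisect import bisect_left

# 4**0 .. 4**13, i.e. 1, 4, 16, ..., 67_108_864
TOKEN_USAGE_BOUNDARIES = [4**i for i in range(14)]


def bucket_index(tokens: int) -> int:
    """Index of the bucket whose upper boundary is the first one >= tokens.

    An index equal to len(TOKEN_USAGE_BOUNDARIES) is the overflow bucket.
    """
    return bisect_left(TOKEN_USAGE_BOUNDARIES, tokens)


print(TOKEN_USAGE_BOUNDARIES[0], TOKEN_USAGE_BOUNDARIES[-1])
print(bucket_index(1000))  # falls in the bucket bounded above by 1024
```

Fourteen boundaries produce fifteen buckets once the overflow bucket above the last boundary is counted.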

Required attributes on both metrics are gen_ai.operation.name, gen_ai.provider.name, and (for token usage) gen_ai.token.type with values "input" or "output". gen_ai.request.model is conditionally required when available. That set is enough to answer questions like “how much did we spend on Claude vs GPT this month” or “what’s our p99 chat latency on gpt-4o” without bolting on custom tags.
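One consequence of gen_ai.token.type is that a single LLM call produces two histogram points, one for input tokens and one for output tokens. The sketch below builds those two points as (value, attributes) pairs. token_usage_points is a hypothetical helper name, and the sketch stops short of calling a real metrics SDK so that it stays self-contained.

```python
from typing import Any, Dict, List, Optional, Tuple


def token_usage_points(
    operation: str,
    provider: str,
    input_tokens: int,
    output_tokens: int,
    model: Optional[str] = None,
) -> List[Tuple[int, Dict[str, Any]]]:
    """Return one (value, attributes) pair per token type for gen_ai.client.token.usage."""
    base: Dict[str, Any] = {
        "gen_ai.operation.name": operation,
        "gen_ai.provider.name": provider,
    }
    if model is not None:
        # Conditionally required: set it whenever the model is known.
        base["gen_ai.request.model"] = model
    return [
        (input_tokens, {**base, "gen_ai.token.type": "input"}),
        (output_tokens, {**base, "gen_ai.token.type": "output"}),
    ]


for value, attrs in token_usage_points("chat", "openai", 120, 45, model="gpt-4o"):
    print(value, attrs["gen_ai.token.type"])
```

With the OpenTelemetry Python metrics API, each pair would typically be passed to a histogram's record(value, attributes) call.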

For streaming workloads, four more histograms are defined: the client-side gen_ai.client.operation.time_to_first_chunk and gen_ai.client.operation.time_per_output_chunk, and the server-side gen_ai.server.time_to_first_token and gen_ai.server.time_per_output_token. The TTFT histogram uses fine-grained buckets starting at 0.001s. Milliseconds matter when you’re optimizing the prefill phase of a self-hosted model.
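To make the streaming metrics concrete, here is a sketch of how the two client-side values could be measured around any chunk iterator. It assumes that time per output chunk is averaged over the chunks after the first one. time_stream is a hypothetical helper, and the clock is injectable so the logic can be checked without real delays.

```python
import time
from typing import Callable, Iterable, Optional, Tuple


def time_stream(
    chunks: Iterable[object],
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[Optional[float], Optional[float]]:
    """Consume a stream and return (time_to_first_chunk, mean_time_per_later_chunk) in seconds."""
    start = clock()
    first: Optional[float] = None
    last = start
    count = 0
    for _ in chunks:
        now = clock()
        if first is None:
            first = now  # arrival of the first chunk
        last = now
        count += 1
    if first is None:
        return None, None  # empty stream: nothing to report
    ttfc = first - start
    per_chunk = (last - first) / (count - 1) if count > 1 else None
    return ttfc, per_chunk


# Deterministic demo: a fake clock that returns scripted timestamps.
ticks = iter([0.0, 0.5, 0.7, 0.9])
print(time_stream(["a", "b", "c"], clock=lambda: next(ticks)))
```

The two returned values would then be recorded into the corresponding histograms with the same attributes as the duration metric.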

Don’t sleep on these. Token cost and TTFT are the two metrics product managers ask about most, and they’re both standardized. If your dashboard says “tokens consumed” and your CFO’s dashboard says “OpenAI spend,” and they don’t match within 1%, the gap is almost always missing or misnamed gen_ai.usage.* attributes.
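The 1% reconciliation check is simple arithmetic once token counts are grouped by model. The sketch below is illustrative only: the model name and the per-million-token prices are made up, and estimated_spend and within_tolerance are hypothetical helpers.

```python
from typing import Dict, Tuple

# Hypothetical prices in dollars per million tokens: (input, output).
PRICES_PER_MILLION: Dict[str, Tuple[float, float]] = {
    "model-a": (2.0, 8.0),
}


def estimated_spend(usage: Dict[str, Tuple[int, int]]) -> float:
    """Sum cost across models from (input_tokens, output_tokens) totals."""
    total = 0.0
    for model, (input_tokens, output_tokens) in usage.items():
        in_price, out_price = PRICES_PER_MILLION[model]
        total += input_tokens / 1_000_000 * in_price
        total += output_tokens / 1_000_000 * out_price
    return total


def within_tolerance(estimated: float, invoiced: float, tolerance: float = 0.01) -> bool:
    """True when the estimate is within `tolerance` (as a fraction) of the invoice."""
    return abs(estimated - invoiced) <= tolerance * invoiced


spend = estimated_spend({"model-a": (3_000_000, 500_000)})
print(spend, within_tolerance(spend, invoiced=10.05))
```

When the check fails, the token totals feeding estimated_spend are the first place to look, since they come straight from the gen_ai.usage.* attributes.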

For the engineering economics behind why you measure latency at all, see reliability patterns where retries and timeouts shape your latency tail.

How Do You Redact PII Without Breaking Traces?

Defense in depth: redact at three layers, and assume each one will fail at least once. First, never capture content you don’t need — set OTEL_INSTRUMENTATION_GENAI_CAPTURE_MESSAGE_CONTENT=false at the SDK level. Second, configure the OpenTelemetry Collector with the redaction and transform processors to scrub anything that does leak through. Third, lock down access to your trace backend so that even if redaction misses something, the blast radius is small.

The redaction processor in the Collector deletes span, log, and metric attributes that don’t match an allowlist, which flips the model from “block sensitive things” to “permit known-safe things.” The transform processor with OTTL is what you reach for when you need pattern-based rewriting, like masking everything matching an email or credit-card regex (OpenTelemetry, 2026). Both run in the Collector, before data leaves your perimeter.

    processors:
      redaction:
        # Allowlist mode: any attribute key not listed here is dropped.
        allow_all_keys: false
        allowed_keys:
          - gen_ai.system
          - gen_ai.provider.name
          - gen_ai.operation.name
          - gen_ai.request.model
          - gen_ai.response.model
          - gen_ai.usage.input_tokens
          - gen_ai.usage.output_tokens
          - gen_ai.token.type
          - gen_ai.agent.name
          - gen_ai.tool.name
        blocked_key_patterns:
          - .*\.message\..*
          - .*\.system_instructions
      transform/scrub-events:
        error_mode: ignore
        # Span attributes are rewritten under trace_statements (log_statements applies to log records).
        trace_statements:
          - context: span
            statements:
              - replace_pattern(attributes["gen_ai.input.messages"], "[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\\.[A-Za-z]{2,}", "<EMAIL>")
              - replace_pattern(attributes["gen_ai.output.messages"], "\\b\\d{3}-\\d{2}-\\d{4}\\b", "<SSN>")

Hard-won finding: redaction at the Collector is the only layer that’s framework-agnostic. If you redact in the LangChain callback handler, a future LlamaIndex migration silently breaks your scrubber. If you redact in the SDK, an SDK upgrade can quietly change attribute keys. Collector-level OTTL outlives both, because it operates on the OTLP wire format directly.
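Before deploying rules like these, it helps to check the allowlist and the regexes against sample data locally. The sketch below mimics both steps in plain Python. It is a test aid, not the Collector's implementation: the Collector uses Go's regular-expression engine, and although patterns this simple are expected to behave the same way in Python's re module, that is an assumption. The helper names allowlist and mask are hypothetical.

```python
import re
from typing import Any, Dict

ALLOWED_KEYS = {
    "gen_ai.provider.name",
    "gen_ai.operation.name",
    "gen_ai.request.model",
    "gen_ai.usage.input_tokens",
    "gen_ai.usage.output_tokens",
}

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def allowlist(attrs: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only known-safe keys, like the redaction processor in allowlist mode."""
    return {k: v for k, v in attrs.items() if k in ALLOWED_KEYS}


def mask(text: str) -> str:
    """Replace emails and SSN-shaped numbers, like the replace_pattern statements."""
    return SSN.sub("<SSN>", EMAIL.sub("<EMAIL>", text))


span_attrs = {
    "gen_ai.request.model": "gpt-4o",
    "gen_ai.input.messages": "Contact jane.doe@example.com, SSN 123-45-6789",
}
print(allowlist(span_attrs))
print(mask(span_attrs["gen_ai.input.messages"]))
```

Running a handful of real-looking but synthetic prompts through mask is a cheap way to catch a regex that silently matches nothing.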

Honeycomb’s documentation makes the same call: “filter out sensitive data as early as possible in the observability pipeline, ideally at the instrumentation layer,” and use the Collector as the second line of defense (Honeycomb, 2026). Both layers are necessary because no single one is reliable on its own.

For broader patterns on protecting requests in flight, see defense-in-depth for APIs including authentication and request validation.

Which Frameworks Already Emit Native gen_ai Spans?

Coverage is wider than most teams realize. The OpenLLMetry project, whose semantic conventions have been folded into the official OpenTelemetry conventions, instruments three categories of integrations (Traceloop, 2026):

- 14 LLM providers: OpenAI, Anthropic, Cohere, Google Generative AI, Groq, Bedrock, HuggingFace, IBM Watsonx, Mistral, Ollama, Replicate, SageMaker, Together AI, Vertex

- 7 vector databases: Chroma, LanceDB, Marqo, Milvus, Pinecone, Qdrant, Weaviate

- 8 frameworks: LangChain, LlamaIndex, LangGraph, Haystack, CrewAI, Langflow, LiteLLM, OpenAI Agents

LangChain’s callback system has been the de facto OTel hook for two years, but the bigger move is LangSmith adding native end-to-end OpenTelemetry support directly into the SDK. LangChain instrumentation now generates detailed gen_ai traces automatically, transports them through OTel’s standardized format, and ingests them in any OTLP-compatible backend (LangChain, 2026). It’s the same shape Datadog ingests, the same shape Honeycomb ingests.

For a closer look at agent observability under the OTel conventions, see the talk by Guangya Liu (IBM) and Karthik Kalyanaraman (Langtrace AI), which covers span hierarchy, framework integrations, and the practical questions that come up at scale.

The contributor list for OpenTelemetry’s GenAI work reads like an industry roll call: Amazon, Elastic, Google, IBM, Microsoft, Langtrace, OpenLIT, Scorecard, and Traceloop are all upstream (OpenTelemetry, 2026). When this many vendors collaborate on a schema before it’s finalized, picking it is the safest engineering bet on the table.

Frequently Asked Questions

Are the OpenTelemetry GenAI conventions production-ready in 2026?

The conventions are still in Development status, with most attributes marked experimental (OpenTelemetry, 2026). That said, Datadog supports them in v1.37+ and 14% of Grafana survey respondents already use LLM observability in production (Grafana Labs, 2026). The schema may still shift on the margins; the core attribute names are unlikely to.

Should I capture prompts as attributes or as events?

Prefer events for content. The opt-in gen_ai.client.inference.operation.details event lets prompts ride a separate pipeline from spans, which simplifies sampling and PII redaction (OpenTelemetry, 2026). Use attributes only for short structural fields like model name, token counts, and finish reason. Default content capture to off and turn it on per request when you actually need it for evals.

Which observability vendors support OTel GenAI semantic conventions today?

Datadog ships native support in OTel SDK/Collector v1.37+ (Datadog, 2026). Honeycomb added AI-specific instrumentation alongside Honeycomb Metrics GA in March 2026 (Honeycomb, 2026). Jaeger v2 rebuilt on the OTel Collector and pivoted toward AI agent observability (CNCF, 2026). New Relic, Grafana, and OpenObserve also map the gen_ai.* namespace.

What’s the difference between invoke_agent and invoke_workflow spans?

invoke_agent represents a single agent’s execution, named "invoke_agent {gen_ai.agent.name}" and either CLIENT or INTERNAL depending on whether the agent runs remotely. invoke_workflow represents “an operation that executes a coordinated process composed of multiple agents” — the orchestration layer above several agent invocations (OpenTelemetry, 2026). One agent equals one invoke_agent. Multi-agent system equals one invoke_workflow parenting several invoke_agent spans.
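The relationship is easiest to see as a tree. The sketch below models span names only, with no tracing SDK involved, and prints the hierarchy for a two-agent workflow. The workflow and agent names are hypothetical, and naming the workflow span "invoke_workflow {name}" is an assumption made by analogy with the agent span.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SpanNode:
    """A span name plus its child spans (illustration only)."""
    name: str
    children: List["SpanNode"] = field(default_factory=list)


def render(node: SpanNode, depth: int = 0) -> List[str]:
    """Return the tree as indented lines, parents before children."""
    lines = ["  " * depth + node.name]
    for child in node.children:
        lines.extend(render(child, depth + 1))
    return lines


workflow = SpanNode("invoke_workflow order-triage", [
    SpanNode("invoke_agent customer-support", [
        SpanNode("chat gpt-4o"),
        SpanNode("execute_tool lookup_order"),
    ]),
    SpanNode("invoke_agent billing"),
])

print("\n".join(render(workflow)))
```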

How do I prevent prompts from leaking into a third-party trace backend?

Three layers: keep OTEL_INSTRUMENTATION_GENAI_CAPTURE_MESSAGE_CONTENT off by default, run a Collector with the redaction processor in allowlist mode, and add a transform processor with OTTL regexes for emails, SSNs, and other PII patterns (OpenTelemetry, 2026). Redact at the Collector — not in the SDK — so the rule survives framework changes. Then audit access to the backend regularly.

Closing Thought

OpenTelemetry GenAI is the schema your tools already speak. Datadog, Honeycomb, Jaeger v2, LangSmith, OpenLLMetry — they all map to the same gen_ai.* namespace, and the gap between “we should instrument this” and “it works in production” is smaller than it has been at any point since LLMs became a serious production concern. Standards-finalization windows are short, and this one is open right now.

If you take one thing away: default content capture to off, emit structural spans always, and put redaction at the Collector. Everything else is a tuning detail. The agent that runs three tool calls, two LLM completions, and one vector search to answer a customer question should produce a trace you can read at a glance, with zero prompt content you did not deliberately decide to keep.

For the architectural primer that pairs naturally with this guide, see tiered memory architectures for AI agents and how they show up in span hierarchy.