What Are ACID Properties and Why Do They Matter for Every Database?

Every backend developer writes SQL. Fewer can explain what actually happens between BEGIN and COMMIT. That gap isn’t academic — it’s the difference between a system that survives a crash and one that silently corrupts your data. 67.7% of businesses experienced significant data loss in the past year (Data Stack Hub, 2026). Most of those incidents trace back to misunderstood fundamentals, not exotic edge cases.

This guide covers the four concepts you need to understand before touching a production database: ACID properties, transaction mechanics, isolation levels, and write-ahead logging. No hand-waving. Concrete examples in PostgreSQL, real benchmark numbers, and the failure modes nobody warns you about.

API security layers

TL;DR: ACID (Atomicity, Consistency, Isolation, Durability) guarantees that database transactions either fully succeed or fully fail, keeping data correct even during crashes. Write-ahead logging makes this possible by recording changes before applying them. According to Splunk and Oxford Economics (2026), Global 2000 companies lose $400 billion annually to downtime — and most of it starts with a misunderstood transaction.

What Does ACID Actually Mean?

Global 2000 companies lose $400 billion annually to downtime — roughly 9% of profits and $200 million per company on average (Splunk/Oxford Economics, 2026). ACID is the set of guarantees that prevents your database from contributing to that number — a framework Theo Härder and Andreas Reuter formalized in 1983 (Härder & Reuter, ACM Computing Surveys, 1983). Every relational database implements these four properties, and understanding them changes how you write every query.

Atomicity means all-or-nothing. A transaction either completes entirely or rolls back entirely. Transfer $500 between two accounts? Both the debit and credit happen, or neither does. There’s no state where the money vanishes.

Consistency means the database moves from one valid state to another. If you have a constraint that account balances can’t go negative, no transaction can violate it — even if the app code tries.

Isolation means concurrent transactions don’t interfere with each other. Two people buying the last concert ticket at the same time won’t both succeed. The database serializes conflicting operations even when they run in parallel.

Durability means committed data survives crashes. Once you get a success response, the data is on disk. Power failure, kernel panic, hardware fault — it doesn’t matter. The commit is permanent.

Here’s what that looks like in practice:

BEGIN;

UPDATE accounts SET balance = balance - 500 WHERE id = 1;

UPDATE accounts SET balance = balance + 500 WHERE id = 2;

-- If anything fails above, both changes are rolled back.

-- If both succeed, COMMIT makes them permanent and crash-safe.

COMMIT;

Without atomicity, a crash between those two UPDATE statements loses $500. Without durability, the COMMIT response lies to your application. Without isolation, a concurrent read might see the intermediate state where account 1 lost money but account 2 hasn’t received it yet. These aren’t hypothetical scenarios — they’re exactly what happens when developers use autocommit without thinking.

Key insight: ACID isn’t four independent features — it’s a system where each property depends on the others. Atomicity without durability is pointless (you roll back correctly but lose committed data). Isolation without consistency lets concurrent transactions corrupt state together instead of separately. This is why partial ACID implementations always fail in production.

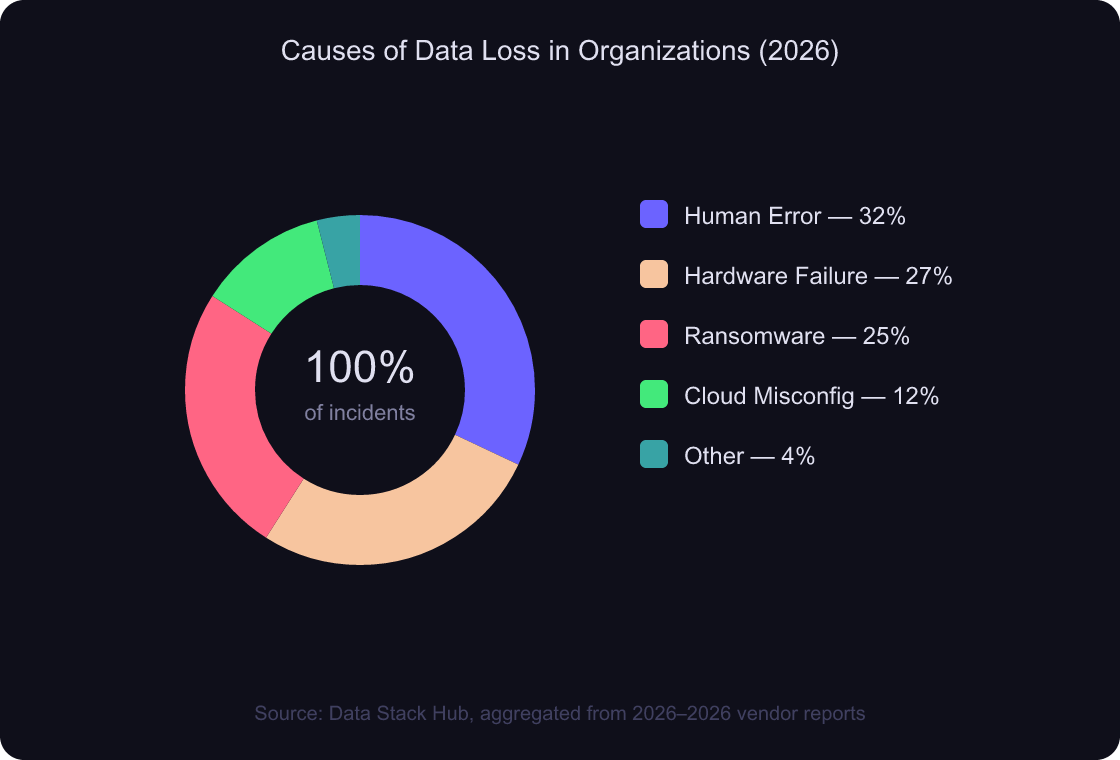

According to Data Stack Hub (2026), human error causes 32% of data loss incidents, hardware failure causes 27%, and ransomware accounts for 25%. ACID properties can’t prevent human error in application logic, but they guarantee the database itself never contributes to the problem.

Source: Data Stack Hub, aggregated from Infrascale, CrashPlan, and Veeam data (2026–2026)

database indexing strategies

How Do Database Transactions Work Under the Hood?

Organizations experience an average of 86 outages per year, and 55% face disruptions at least weekly (CockroachDB State of Resilience 2026, 2026). Transactions are the mechanism that keeps your data consistent through every one of those disruptions. Here’s what actually happens when you run one.

A transaction has three possible outcomes: commit, rollback, or abort. That’s it. There’s no “partially committed” state. The database maintains this guarantee using a transaction log (more on that in the WAL section) and an internal state machine.

BEGIN;

-- Step 1: Read current inventory

SELECT quantity FROM products WHERE id = 42 FOR UPDATE;

-- Returns: quantity = 1

-- Step 2: Verify business logic in your application

-- (only proceed if quantity > 0)

-- Step 3: Decrement inventory

UPDATE products SET quantity = quantity - 1 WHERE id = 42;

-- Step 4: Create the order

INSERT INTO orders (product_id, user_id, created_at)

VALUES (42, 7, NOW());

COMMIT;

The FOR UPDATE clause in step 1 is doing heavy lifting here. It acquires a row-level lock on that product row, preventing any other transaction from modifying it until this transaction completes. Without it, two concurrent buyers could both read quantity = 1, both decrement, and end up with quantity = -1. That’s a classic race condition that isolation alone doesn’t always prevent — you need explicit locking for inventory-type operations.

What happens during a crash? If the server dies between the UPDATE and the INSERT, the entire transaction rolls back on recovery. The inventory stays at 1. The order never exists. No inconsistency. That’s atomicity in action.

Practical tip: Wrap your transactions as tightly as possible. I’ve debugged production deadlocks caused by transactions holding locks during HTTP calls to external services. The service times out, the lock holds for 30 seconds, and every query on that row queues behind it. Keep transactions short: read, compute, write, commit.

What about SAVEPOINT? PostgreSQL supports nested checkpoints within a transaction:

BEGIN;

INSERT INTO orders (product_id, user_id) VALUES (42, 7);

SAVEPOINT before_payment;

UPDATE wallets SET balance = balance - 99.99 WHERE user_id = 7;

-- If payment logic fails:

ROLLBACK TO before_payment;

-- The order INSERT still exists. Only the wallet UPDATE is undone.

COMMIT;

Savepoints let you handle partial failures without aborting the whole transaction. They’re useful for batch operations where you want to skip failed rows without losing the entire batch.

PostgreSQL performance tuning

Why Do Isolation Levels Exist?

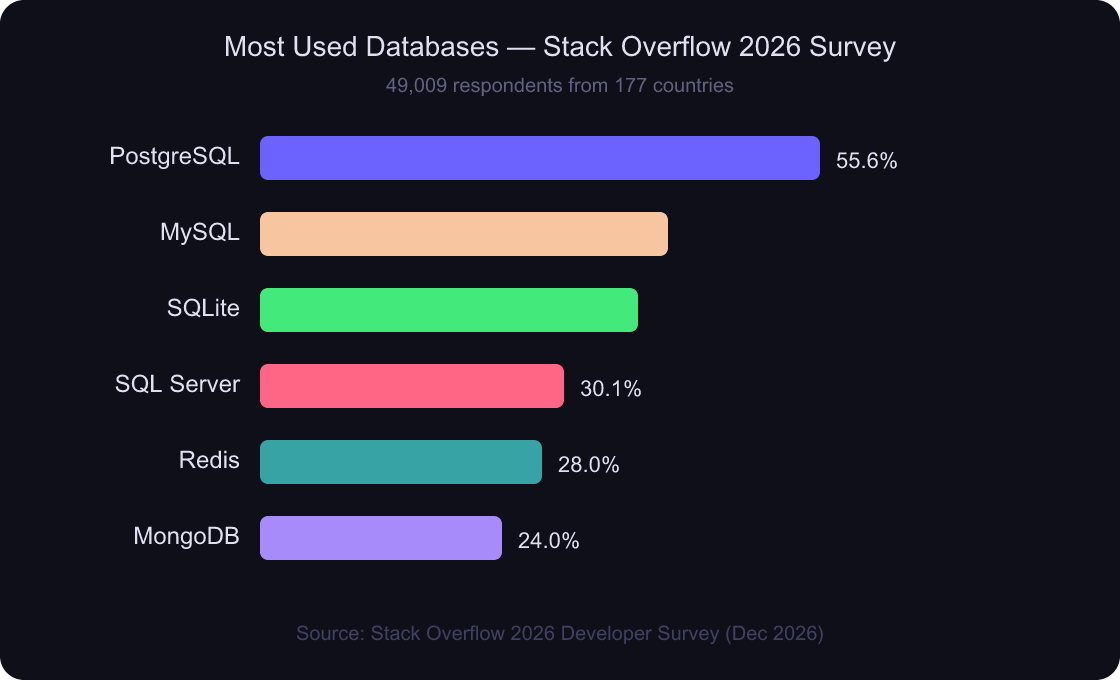

PostgreSQL tops the 2026 Stack Overflow Developer Survey at 55.6% usage across 49,009 respondents from 177 countries (Stack Overflow, 2026). It’s the most popular database precisely because it handles concurrency well — and isolation levels are how it does it.

Isolation levels exist because there’s a fundamental tradeoff between correctness and performance. Stricter isolation means fewer concurrency bugs but more lock contention. Looser isolation means higher throughput but the possibility of reading stale or inconsistent data. You’re choosing where on that spectrum your application sits.

The SQL standard defines four levels. Here’s what each one allows:

| Isolation Level | Dirty Reads | Non-Repeatable Reads | Phantom Reads |

|—|—|—|—|

| Read Uncommitted | Possible | Possible | Possible |

| Read Committed | Prevented | Possible | Possible |

| Repeatable Read | Prevented | Prevented | Possible* |

| Serializable | Prevented | Prevented | Prevented |

*PostgreSQL’s Repeatable Read implementation actually prevents phantom reads too, because it uses MVCC snapshots rather than lock-based isolation.

What do these anomalies look like? Here’s a dirty read — the most dangerous one:

-- Transaction A -- Transaction B

BEGIN;

UPDATE users SET role = 'admin'

WHERE id = 5;

BEGIN;

-- With READ UNCOMMITTED, this sees

-- role = 'admin' even though A

-- hasn't committed yet.

SELECT role FROM users WHERE id = 5;

ROLLBACK;

-- Now Transaction B acted on data

-- that never actually existed.

COMMIT;

PostgreSQL doesn’t even implement Read Uncommitted — if you set it, you get Read Committed instead. That’s a deliberate design choice. The PostgreSQL team decided dirty reads are never an acceptable tradeoff. Can you blame them?

Why PostgreSQL’s approach is different: Most databases implement isolation with locks. PostgreSQL uses MVCC instead — every transaction sees a snapshot from when it started. Readers never block writers. Writers never block readers. This is why PostgreSQL handles mixed read/write workloads better than lock-based systems.

The default isolation level in PostgreSQL (and MySQL) is Read Committed. For most web applications, that’s the right choice. You won’t see uncommitted data, and the performance is good. Move to Repeatable Read or Serializable only when your application logic requires it — financial calculations, inventory management, or anywhere a read-then-write pattern must see consistent data.

Source: Stack Overflow 2026 Developer Survey, 49,009 respondents

database connection pooling

What Is Write-Ahead Logging and Why Does Every Database Use It?

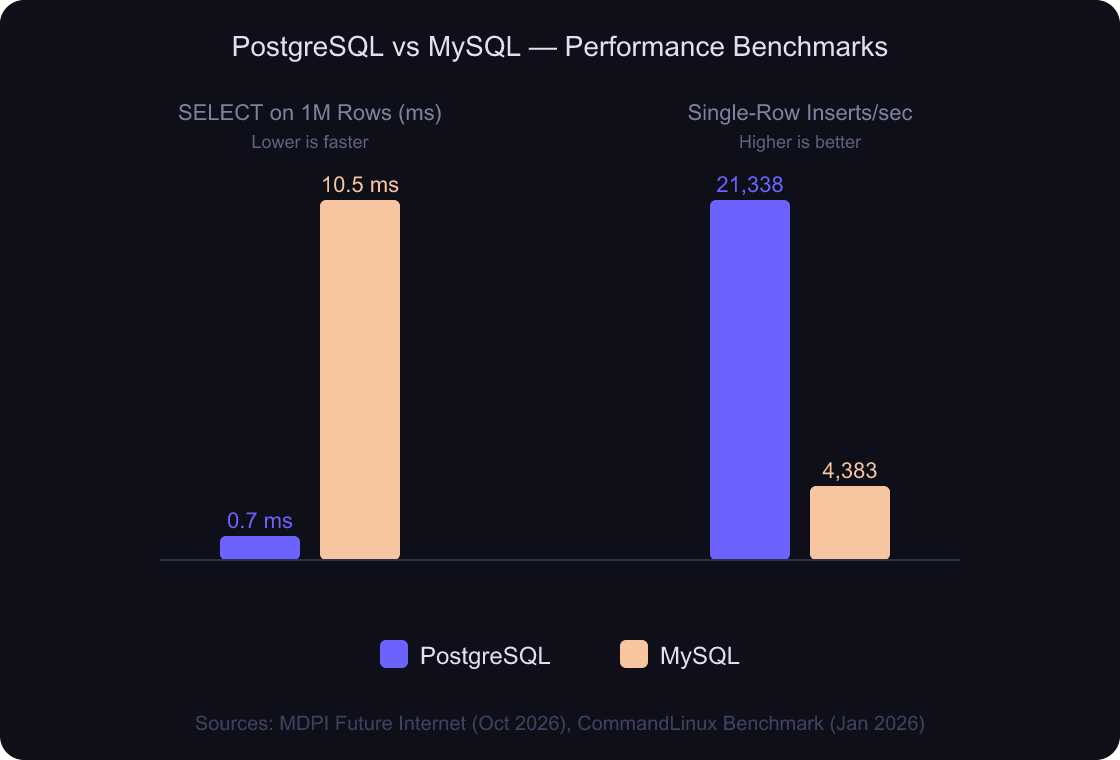

PostgreSQL handled 21,338 single-row inserts per second versus MySQL’s 4,383 — a 4.87x throughput gap largely driven by WAL design (CommandLinux Benchmark, 2026). WAL works by flushing a single sequential log file instead of scattering writes across every modified data page (PostgreSQL Documentation v18). Without it, every COMMIT would require dozens of random disk seeks.

Here’s the core idea. When you modify data, the database doesn’t immediately update the actual data files (called “heap” files). Instead, it writes a description of the change to a sequential log file first — the write-ahead log. Only after the WAL record is safely on disk does the transaction count as committed.

Why is sequential I/O so much faster? A spinning disk can write ~200 MB/s sequentially but only ~2 MB/s randomly (that’s a 100x difference). SSDs narrow the gap, but sequential writes are still 3-5x faster even on NVMe. WAL exploits this by converting random writes into sequential ones.

The process works like this:

- Your

UPDATEmodifies the row in shared memory (the buffer cache) - A WAL record describing the change is written to the WAL buffer

- At

COMMIT, the WAL buffer is flushed to disk (fsync) - You get your success response — the data is durable

- Later, a background process (“checkpointer”) writes the dirty pages from memory to the actual data files

Step 5 happens lazily. That’s the key insight — the data files can be out of date, because the WAL has everything needed to reconstruct the current state. If the server crashes between steps 4 and 5, PostgreSQL replays the WAL on startup and recovers without data loss.

Benchmark context: PostgreSQL completed SELECT queries on 1 million records in 0.6–0.8 ms versus MySQL’s 9–12 ms — roughly 13x faster — in a peer-reviewed benchmark using identical hardware (Salunke & Ouda, MDPI Future Internet, 2026). PostgreSQL’s MVCC and WAL implementation contributes directly to this read performance advantage, since readers never wait for WAL flushes.

PostgreSQL 18 takes this further with asynchronous I/O, delivering 2–3x improvement in sequential scan read performance on cold caches (Aiven, 2026). Instead of reading one page at a time and blocking, the database now issues multiple read requests in parallel and processes them as they complete. For analytics queries scanning large tables, that cuts cold-cache latency in half or more.

Sources: Salunke & Ouda, MDPI Future Internet (2026); CommandLinux SysBench Benchmark (2026)

WAL also enables replication. Streaming replication in PostgreSQL works by shipping WAL records to a standby server, which replays them in order. That’s how read replicas stay in sync — they’re essentially running continuous crash recovery against a stream of changes from the primary.

database backup strategies

What Happens When ACID Guarantees Break?

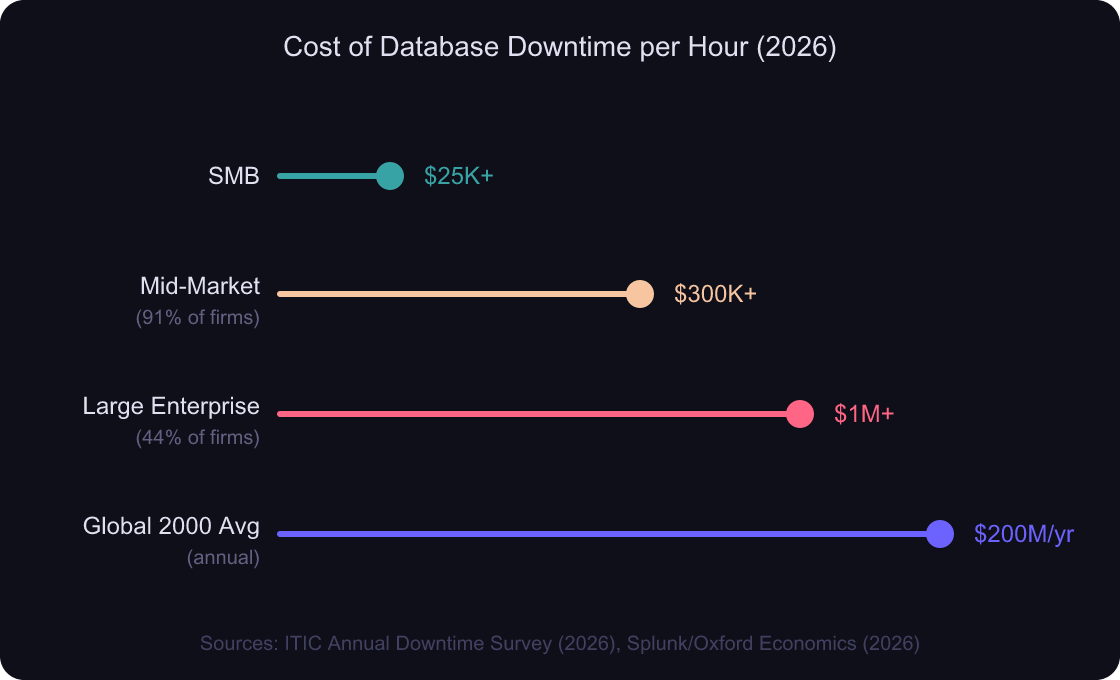

91% of mid-to-large enterprises report that a single hour of downtime costs over $300,000, and 44% say it exceeds $1 million (ITIC, 2026). When ACID guarantees fail, the cost isn’t just the downtime itself — it’s the data corruption that lingers after the system comes back up.

Here are the real failure modes:

Torn writes happen when a crash interrupts a page write. Half the page has new data, half has old data. Without WAL, that page is permanently corrupted. With WAL, PostgreSQL detects the torn page during recovery and rebuilds it from the log. This is why full_page_writes is enabled by default — it’s your safety net.

Lost updates happen when two transactions read the same row, compute new values independently, and write back — the second write overwrites the first. This is an isolation failure, not a crash failure. The fix is either SELECT ... FOR UPDATE (pessimistic locking) or using a higher isolation level like Repeatable Read with retry logic.

Phantom reads happen when a transaction re-runs a range query and gets different rows because another transaction inserted data in the range. In financial reporting, this means your totals don’t add up. PostgreSQL’s Repeatable Read prevents this, but Read Committed doesn’t.

How many teams actually test their recovery? Only 20% of executives feel fully prepared for outages, despite organizations averaging 86 disruptions per year (CockroachDB, 2026). The fix isn’t buying more infrastructure. It’s understanding which ACID guarantee your application depends on, configuring the right isolation level, and testing your recovery path before production forces you to.

The real risk: According to ITIC (2026), 44% of enterprises say a single hour of downtime exceeds $1 million — yet most teams never simulate a crash recovery. Torn writes, lost updates, and phantom reads aren’t edge cases. They’re the default behavior when you misconfigure isolation or skip WAL checkpoints.

Sources: ITIC Annual Hourly Cost of Downtime Survey (2026), Splunk/Oxford Economics “Hidden Costs of Downtime” (2026)

monitoring and observability for databases

Frequently Asked Questions

What’s the difference between optimistic and pessimistic locking?

Pessimistic locking acquires locks upfront (SELECT ... FOR UPDATE) and blocks other transactions from modifying the same rows. Optimistic locking doesn’t lock anything — it checks at commit time whether the data changed, and retries if it did. According to CockroachDB’s resilience report (2026), 55% of organizations experience weekly disruptions, making retry-friendly optimistic patterns increasingly common in distributed systems.

Does MongoDB support ACID transactions?

Yes, since version 4.0 (2018) for replica sets and 4.2 for sharded clusters. MongoDB supports multi-document ACID transactions with Read Committed and Snapshot isolation. However, the performance overhead is significant — MongoDB’s documentation recommends designing schemas to minimize multi-document transactions, which is the opposite of the relational approach.

SQL vs NoSQL database selection

Which isolation level should I use by default?

Read Committed is the right default for most web applications — and it’s the default in PostgreSQL, the database used by 55.6% of developers (Stack Overflow, 2026). It prevents dirty reads while maintaining strong throughput. Only move to Repeatable Read or Serializable when your business logic requires consistent reads within a single transaction, such as financial reporting or inventory management.

How much disk space does WAL consume?

PostgreSQL keeps enough WAL segments to recover from the last checkpoint, plus any segments needed by replication slots. The default max_wal_size is 1 GB — PostgreSQL triggers a checkpoint when WAL hits this threshold. In a January 2026 benchmark, PostgreSQL achieved 21,338 single-row inserts per second with WAL enabled (CommandLinux, 2026). Busy production databases generate 10–100 GB of WAL daily, but most gets recycled. Monitor with pg_stat_wal in PostgreSQL 14+.

Can you have ACID compliance in distributed databases?

Yes, but with tradeoffs. The CAP theorem states you can’t have strong consistency, availability, and partition tolerance simultaneously. Databases like CockroachDB and Google Spanner achieve distributed ACID using consensus protocols (Raft, Paxos) at the cost of higher write latency. The cloud database market is expected to reach $120.22 billion by 2034, up from $24.17 billion in 2026 (Fortune Business Insights, 2026), largely driven by demand for distributed ACID guarantees.

Conclusion

Database fundamentals aren’t optional knowledge for backend developers. They’re the foundation everything else sits on — and the first thing that breaks when you don’t understand them.

Here’s what to take away:

- ACID guarantees your data stays correct through crashes, concurrency, and hardware failures

- Transactions are all-or-nothing units of work — keep them short and avoid holding locks across network calls

- Isolation levels are a tradeoff dial between correctness and performance — Read Committed is the right default, go stricter only when the business logic demands it

- WAL converts expensive random writes into cheap sequential ones and enables crash recovery, replication, and point-in-time restore

Your next step: open a psql shell and run SHOW default_transaction_isolation;. Know what your application is actually running at. Then check SHOW wal_level;. If you’re running a production database and can’t explain what those two settings do, this article just paid for itself.

PostgreSQL production checklist

Which International Payment Gateway Should Developers Choose in 2026?

How Do You Write an Article? A 7-Step Guide Backed by Data From 912 Million Posts

How Do You Secure an API? The 4-Layer Framework That Actually Works