CodeProbe: 9 Specialized AI Agents That Audit Your Codebase for SOLID, Security & Performance

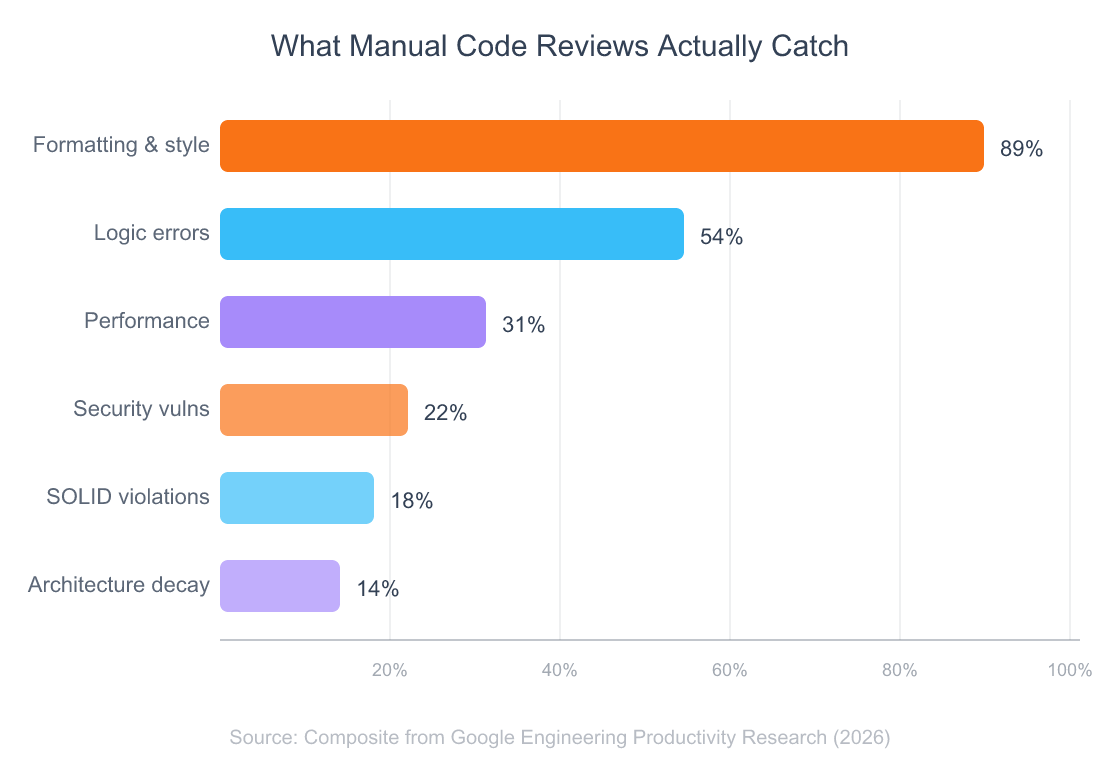

Developers spend an average of 6.4 hours per week reviewing code (Google Engineering Productivity Research, 2026). That’s a full workday gone — and most of those reviews still miss the issues that actually hurt you in production.

Security flaws slip through. SOLID violations pile up into technical debt. Architecture problems compound until a refactor feels impossible. The median pull request takes 24 hours to merge, and the stuff that gets caught is usually formatting and naming, not the structural rot quietly spreading through your codebase.

I built CodeProbe to fix this. It’s a Claude Code skill that deploys 9 specialized AI agents to audit your code across security, SOLID principles, architecture, performance, and more — each producing severity-scored findings with copy-pasteable fix prompts you can run directly in your terminal.

Here’s how it works, what each agent does, and how to run your first audit in under two minutes.


TL;DR: CodeProbe is an open-source Claude Code skill that runs 9 specialized AI agents across your codebase — covering security, SOLID, architecture, performance, and more. 47% of developers now use AI-assisted code review (Stack Overflow, 2026). Install it in one command and get severity-scored findings with fix prompts.

Table of Contents

- Why Can’t Manual Code Reviews Keep Up?

- What Is CodeProbe?

- Which 4 Agents Handle Core Code Quality?

- What Do the 5 Extended Agents Cover?

- How Does the Scoring System Work?

- How Do You Run Your First Audit?

- What Makes This Different from Traditional SAST Tools?

- FAQ

Why Can’t Manual Code Reviews Keep Up?

The cost of poor software quality in the United States hit $2.41 trillion in 2026 (CISQ, 2026). That number isn’t some abstract industry estimate — it’s the accumulated cost of bugs, security breaches, and technical debt that human reviewers didn’t catch in time.

Here’s the math that doesn’t work. Your senior engineers spend 6.4 hours a week reviewing pull requests. The median PR takes 24 hours to merge. And what do those reviews actually catch? Variable naming. Missing null checks. Maybe a logic error if the reviewer isn’t multitasking.

What they don’t catch is the stuff that hurts most. SQL injection vectors hiding in dynamic queries. Single Responsibility violations creating god classes that touch everything. Circular dependencies making your architecture a house of cards. By one widely cited estimate, a bug caught in production costs up to 100x more to fix than one caught during development (IBM Systems Sciences Institute).

The real problem isn’t speed — it’s coverage. Manual reviewers optimize for the PR in front of them. They don’t cross-reference your authentication patterns across 50 files or check whether your new service violates the dependency inversion principle at the architecture level. That kind of systematic analysis requires a different approach entirely.

Here’s what makes this worse. AI-generated code now produces 1.7x more issues per pull request than human-written code, with security vulnerabilities appearing 1.57–2.74x more frequently (CodeRabbit, 2026). Teams using AI coding assistants saw PR review time increase 91% (Faros AI, 2026). More code gets written faster, but the review bottleneck just got bigger. So what fills the gap?


What Is CodeProbe?

Repositories using AI-assisted code review showed 32% faster merge times and 28% fewer post-merge defects in 2026 (GitHub Octoverse, 2026). CodeProbe brings that kind of impact to any project through Claude Code’s skill system.

It’s an open-source skill you install in one command. Once installed, you type /codeprobe audit . in any project, and 9 specialized AI agents analyze your codebase in parallel. Each agent focuses on a single domain — security, SOLID principles, architecture, performance — and produces structured findings you can actually act on.

The architecture follows an orchestrator + sub-skill model:

- The Orchestrator receives your command, auto-detects your tech stack by scanning file extensions and project markers (like next.config.js, migrations/, or package.json), and loads the relevant reference guides.

- Nine sub-skills each run as domain experts. They analyze your code through their specific lens and produce severity-scored findings.

- Reference guides provide stack-specific best practices for Python, JavaScript/TypeScript, React/Next.js, PHP/Laravel, SQL, and API design.

- A statistics script gives you deterministic metrics — lines of code, file counts, method counts, test ratios.
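The stack detection step described above can be sketched in a few lines. This is a minimal illustration, not CodeProbe's actual detection code: the `MARKERS` and `EXTENSIONS` tables and the `detect_stacks` function are hypothetical names, and the real rules may differ.

```python
from pathlib import PurePosixPath

# Hypothetical lookup tables; CodeProbe's real detection rules may differ.
MARKERS = {
    "next.config.js": "React/Next.js",
    "package.json": "JavaScript/TypeScript",
    "migrations": "SQL",
}
EXTENSIONS = {
    ".py": "Python",
    ".php": "PHP/Laravel",
    ".js": "JavaScript/TypeScript",
    ".ts": "JavaScript/TypeScript",
    ".sql": "SQL",
}


def detect_stacks(paths):
    """Return the sorted list of stacks suggested by relative file paths."""
    stacks = set()
    for raw in paths:
        path = PurePosixPath(raw)
        # A project marker can be a file name or a directory name in the path.
        for part in path.parts:
            if part in MARKERS:
                stacks.add(MARKERS[part])
        # File extensions add further hints.
        if path.suffix in EXTENSIONS:
            stacks.add(EXTENSIONS[path.suffix])
    return sorted(stacks)


print(detect_stacks(["next.config.js", "src/app/page.tsx", "migrations/001_init.sql"]))
# ['JavaScript/TypeScript', 'React/Next.js', 'SQL']
```

The detected stacks would then decide which reference guides get loaded.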

The key differentiator? Every single finding includes a fix prompt — a copy-pasteable instruction you can run directly in Claude Code to apply the suggested fix. Not a vague recommendation. An actionable command.

Over 1.3 million repositories now use at least one AI code review integration, a fourfold increase (GitHub Octoverse, 2026). CodeProbe is purpose-built for developers who already live in the terminal and want review intelligence without switching tools.


Which 4 Agents Handle Core Code Quality?

The JetBrains Developer Ecosystem Survey found that 44% of developers used an AI-powered code review tool in 2026, up from 18% previously (JetBrains, 2026). The first four agents cover the areas where AI review delivers the most immediate value.

1. Security Agent (/codeprobe security)

This agent scans for nine categories of security vulnerabilities: SQL injection, cross-site scripting (XSS), hardcoded secrets, authentication flaws, CSRF, insecure deserialization, sensitive data exposure, broken access control, and security misconfiguration.

It doesn’t just flag patterns. It understands context. A hardcoded string in a test fixture gets ignored. A hardcoded API key in a service file gets flagged as critical. An APP_DEBUG=true in a .env.example is fine — in production config, it’s a severity-scored finding.

Example output:

### SEC-003 | Critical | `src/auth/login.php:22-35`

Problem: SQL query built with string concatenation using unsanitized user input.

Evidence:

> Line 25: $query = "SELECT * FROM users WHERE email = '" . $_POST['email'] . "'";

Fix prompt:

> Refactor src/auth/login.php lines 22-35 to use PDO prepared statements

> instead of string concatenation for the SQL query.
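The finding above is PHP, but the underlying fix is the same in any language: keep user input out of the SQL text. Here is a rough Python sketch of the difference, using the standard library's `sqlite3` module as a stand-in for PDO. The table, data, and function names are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.execute("INSERT INTO users (email) VALUES ('a@example.com')")


def find_user_unsafe(email):
    # Vulnerable: user input becomes part of the SQL text itself.
    sql = "SELECT id FROM users WHERE email = '" + email + "'"
    return conn.execute(sql).fetchall()


def find_user_safe(email):
    # Parameterized: the value is bound separately from the SQL text.
    return conn.execute("SELECT id FROM users WHERE email = ?", (email,)).fetchall()


payload = "' OR '1'='1"
print(find_user_unsafe(payload))  # the injected condition matches every row
print(find_user_safe(payload))    # the payload is treated as a literal email
```

With the concatenated query, the payload rewrites the WHERE clause. With the bound parameter, it is just an email address that matches nothing.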

2. SOLID Principles Agent (/codeprobe solid)

This one catches violations across all five SOLID principles. Classes with 5+ unrelated public methods (SRP). Switch/if-else chains on growable types (OCP). Subclass overrides that change parent semantics (LSP). Interfaces with 8+ methods nobody fully implements (ISP). Direct new ConcreteClass() calls in business logic (DIP).

It’s smart about exclusions too. Simple DTOs, small scripts, and framework-generated classes don’t trigger false positives.
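To make the DIP case concrete, here is a small sketch of the shape such a fix usually takes: the business class receives its dependency instead of constructing it. All class names here are hypothetical and only illustrate the principle, not CodeProbe's output.

```python
from typing import List, Protocol, Tuple


class Mailer(Protocol):
    """The abstraction the business logic depends on."""

    def send(self, to: str, body: str) -> None: ...


class SmtpMailer:
    """A concrete implementation (the real delivery is omitted)."""

    def send(self, to: str, body: str) -> None:
        print(f"SMTP -> {to}: {body}")


class RecordingMailer:
    """Test double that records messages instead of sending them."""

    def __init__(self) -> None:
        self.sent: List[Tuple[str, str]] = []

    def send(self, to: str, body: str) -> None:
        self.sent.append((to, body))


class SignupService:
    # The mailer is injected, so there is no SmtpMailer() call in here.
    def __init__(self, mailer: Mailer) -> None:
        self._mailer = mailer

    def register(self, email: str) -> None:
        self._mailer.send(email, "Welcome!")


mailer = RecordingMailer()
SignupService(mailer).register("a@example.com")
print(mailer.sent)
```

Because `SignupService` only knows about the `Mailer` protocol, swapping SMTP for another provider or a test double needs no change to the business logic.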

3. Architecture Agent (/codeprobe architecture)

Think of this as your structural integrity scanner. It detects circular dependencies, layer violations (business logic in controllers, views calling the database directly), god objects over 500 lines, anemic domain models, and missing module boundaries.
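Circular dependencies are the easiest of these to picture as an algorithm. The sketch below is a plain depth-first search over a module dependency graph. It assumes the graph has already been extracted as a dict of module name to dependencies, and it is not how CodeProbe itself does the analysis (the agents reason over the code rather than run a fixed algorithm).

```python
def find_cycle(graph):
    """Return one dependency cycle as a list of module names, or None."""
    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current path, finished
    color = {node: WHITE for node in graph}
    path = []

    def visit(node):
        color[node] = GRAY
        path.append(node)
        for dep in graph.get(node, ()):
            state = color.get(dep, WHITE)
            if state == GRAY:
                # dep is already on the current path, so we closed a loop.
                return path[path.index(dep):] + [dep]
            if state == WHITE:
                cycle = visit(dep)
                if cycle:
                    return cycle
        path.pop()
        color[node] = BLACK
        return None

    for node in graph:
        if color[node] == WHITE:
            cycle = visit(node)
            if cycle:
                return cycle
    return None


modules = {"orders": ["billing"], "billing": ["users"], "users": ["orders"]}
print(find_cycle(modules))  # ['orders', 'billing', 'users', 'orders']
```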

Gartner predicts that by 2026, 80% of all technical debt will be architectural — requiring system redesign, not just refactoring sprints (Gartner, 2026). This agent catches those problems before they become expensive to fix.

4. Code Smells Agent (/codeprobe smells)

Long methods, deep nesting, duplicate code, primitive obsession, dead code, feature envy — the classic smells that make codebases painful to maintain. Thresholds are configurable: if your team is fine with 50-line methods, you set that in .codeprobe-config.json and the agent adjusts.
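For a sense of what a configurable threshold like nesting depth measures, here is a minimal sketch that computes the deepest control-flow nesting in a piece of Python source using the standard `ast` module. The `max_nesting` helper and the choice of which node types count as nesting are assumptions for illustration, not CodeProbe internals.

```python
import ast

# Node types treated as one nesting level each (an illustrative choice).
# Note: Python represents `elif` as an If nested in the parent's else branch,
# so this simple version counts an elif chain as extra depth.
NESTING_NODES = (ast.If, ast.For, ast.While, ast.With, ast.Try)


def max_nesting(source):
    """Return the deepest level of nested control-flow blocks in the source."""

    def depth(node, current):
        if isinstance(node, NESTING_NODES):
            current += 1
        deepest = current
        for child in ast.iter_child_nodes(node):
            deepest = max(deepest, depth(child, current))
        return deepest

    return depth(ast.parse(source), 0)


sample = """
def handle(items):
    for item in items:
        if item:
            while item > 0:
                item -= 1
"""
print(max_nesting(sample))  # 3
```

A team-specific limit would then be a simple comparison against this number.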

How the scoring works in practice: Each critical finding deducts 25 points from a category’s 100-point score. Major issues cost 10, minor issues cost 3. Suggestions don’t affect scores. A codebase scoring 80+ is healthy; below 60 is critical. In my testing across a dozen projects, the security agent alone dropped scores below 60 in three out of four Laravel codebases that hadn’t been audited before.


What Do the 5 Extended Agents Cover?

Nearly two-thirds of open-source vulnerabilities disclosed in 2026 lacked severity scores from the National Vulnerability Database (Sonatype, 2026). That scoring gap means developers can’t prioritize fixes — unless their tools do the scoring for them. These five agents extend CodeProbe’s coverage beyond the core four.

5. Design Patterns Agent (/codeprobe patterns)

Identifies misapplied design patterns and missed opportunities. If you’re implementing a Strategy pattern but hardcoding the strategy selection, this agent catches it. If a Builder pattern would simplify your 12-parameter constructor, it suggests the refactor.

6. Performance Agent (/codeprobe performance)

Hunts for N+1 query problems, memory leaks, unnecessary re-renders in React components, unoptimized database queries, and missing indexes. Performance issues are notoriously hard to spot in code review because they only surface under load. This agent flags the patterns that cause them.
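If you haven't run into the N+1 pattern before, this small sketch shows it with an in-memory SQLite database. The schema and helper names are invented for the example. The `counter` dict just tracks how many queries each approach issues.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
INSERT INTO authors (id, name) VALUES (1, 'Ada'), (2, 'Linus');
INSERT INTO posts (author_id, title) VALUES (1, 'A1'), (1, 'A2'), (2, 'L1');
""")

counter = {"queries": 0}


def run(sql, params=()):
    """Execute a query and count it."""
    counter["queries"] += 1
    return conn.execute(sql, params).fetchall()


def titles_n_plus_one():
    # One query for the authors, then one more query per author.
    result = {}
    for author_id, name in run("SELECT id, name FROM authors ORDER BY id"):
        rows = run("SELECT title FROM posts WHERE author_id = ? ORDER BY id", (author_id,))
        result[name] = [title for (title,) in rows]
    return result


def titles_single_query():
    # One JOIN fetches everything in a single round trip.
    result = {}
    rows = run(
        "SELECT a.name, p.title FROM authors a "
        "JOIN posts p ON p.author_id = a.id ORDER BY a.id, p.id"
    )
    for name, title in rows:
        result.setdefault(name, []).append(title)
    return result
```

With two authors the loop version issues three queries and the JOIN issues one. The gap grows linearly with the number of authors, which is why this pattern stays invisible in development and hurts under production load.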

7. Error Handling Agent (/codeprobe errors)

Swallowed exceptions. Bare catch blocks that log nothing. Missing error boundaries in React. Inconsistent error response formats across API endpoints. This agent enforces the error handling discipline that most teams aspire to but rarely maintain under deadline pressure.
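A swallowed exception looks harmless in a diff, which is why it slips through reviews. Here is a minimal before/after sketch in Python. The function names and logger name are hypothetical.

```python
import logging

logger = logging.getLogger("payments")


def parse_amount_swallowed(raw):
    # Anti-pattern: the failure is silently discarded and None leaks out.
    try:
        return float(raw)
    except Exception:
        pass


def parse_amount_explicit(raw):
    # Catch only the expected error, log it, and re-raise with context.
    try:
        return float(raw)
    except ValueError as exc:
        logger.warning("Invalid amount %r: %s", raw, exc)
        raise ValueError(f"Invalid amount: {raw!r}") from exc
```

The first version turns bad input into a `None` that fails somewhere far from the cause. The second fails at the source, with a log line and the original exception chained.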

8. Test Quality Agent (/codeprobe tests)

Goes beyond coverage percentages. It checks for brittle assertions tied to implementation details, missing edge case tests, test files that haven’t been updated after significant code changes, and test doubles that drift from the real implementations they mock.

9. Framework Agent (/codeprobe framework)

Loads stack-specific anti-patterns based on auto-detected frameworks. For Laravel: are you using $request->all() in mass assignment? For Next.js: are you fetching data client-side when server components would eliminate the waterfall? For Django: are you running raw SQL when the ORM handles it safely?

Six dedicated reference guides power this agent — covering PHP/Laravel, JavaScript/TypeScript, React/Next.js, Python, SQL/Database, and API design.

Across all nine agents, CodeProbe produces findings with a consistent structure: ID, severity, file location with line numbers, problem description, code evidence, suggestion, and a fix prompt. That uniformity matters. You’re not deciphering different output formats across tools — it’s one workflow for everything.
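That shared structure maps naturally onto a small record type. The dataclass below is only a sketch of the seven fields listed above. The field names, the `to_markdown` helper, and the sample suggestion text are my assumptions here, not a schema that CodeProbe exposes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    id: str
    severity: str  # "critical", "major", "minor", or "suggestion"
    location: str  # file path with line numbers
    problem: str
    evidence: str
    suggestion: str
    fix_prompt: str

    def to_markdown(self) -> str:
        """Render the finding in a layout similar to the example output."""
        return "\n".join([
            f"### {self.id} | {self.severity.capitalize()} | `{self.location}`",
            f"Problem: {self.problem}",
            f"Evidence: {self.evidence}",
            f"Suggestion: {self.suggestion}",
            f"Fix prompt: {self.fix_prompt}",
        ])


finding = Finding(
    id="SEC-003",
    severity="critical",
    location="src/auth/login.php:22-35",
    problem="SQL query built with string concatenation using unsanitized user input.",
    evidence="Line 25 concatenates $_POST['email'] into the query string.",
    suggestion="Use prepared statements.",  # illustrative text
    fix_prompt="Refactor src/auth/login.php lines 22-35 to use PDO prepared statements.",
)
print(finding.to_markdown())
```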


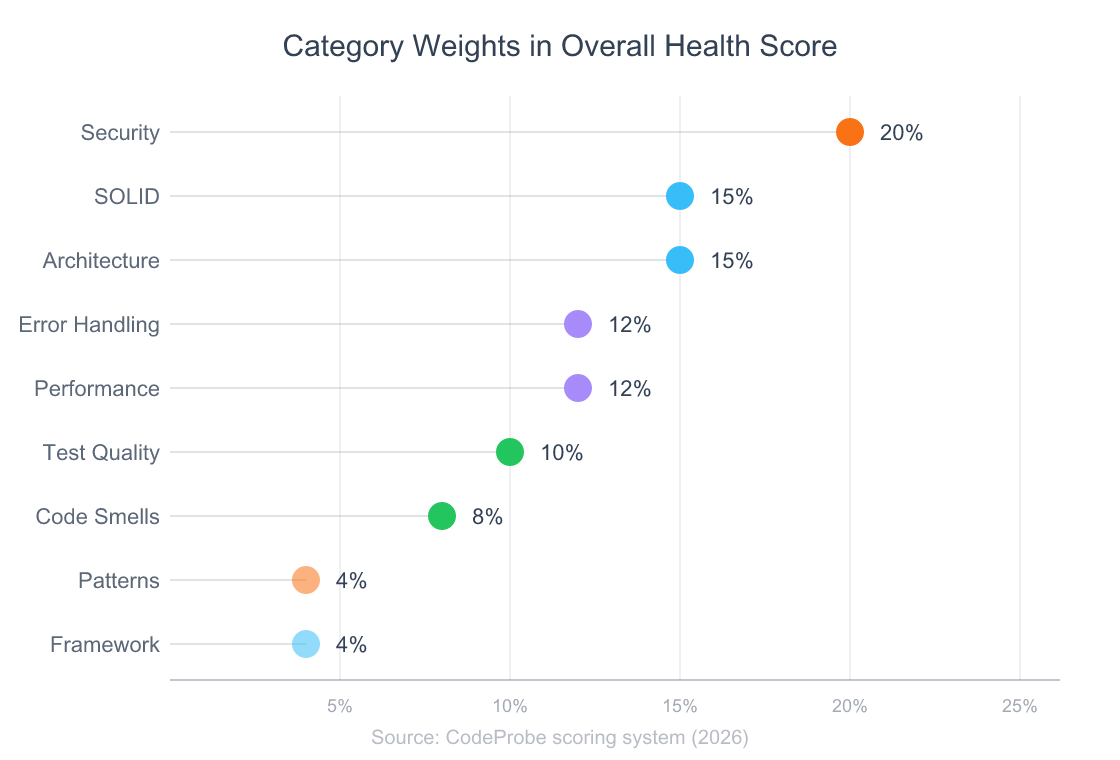

How Does the Scoring System Work?

A weighted scoring system calculates your codebase health from findings across all nine agents. Each category starts at 100, and findings deduct points based on severity: critical issues subtract 25, major issues subtract 10, minor issues subtract 3. Suggestions don’t count against you.

The overall health score is a weighted average:

| Category | Weight |
|---|---|
| Security | 20% |
| SOLID Principles | 15% |
| Architecture | 15% |
| Error Handling | 12% |
| Performance | 12% |
| Test Quality | 10% |
| Code Smells | 8% |
| Design Patterns | 4% |
| Framework | 4% |

Security carries the heaviest weight at 20% because a single critical vulnerability can compromise your entire application. SOLID and architecture share second place at 15% each — they’re the leading indicators of long-term maintainability.

Three health tiers classify your result:

- 80–100 (Healthy): Your codebase is in good shape. Address minor findings at your pace.

- 60–79 (Needs Attention): Structural issues are accumulating. Prioritize major findings before adding new features.

- 0–59 (Critical): Significant problems need immediate attention. The refactoring roadmap in the audit output will help you prioritize.
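The arithmetic is easy to check by hand. Here is a short sketch that applies the deductions, weights, and tiers described above. Two details are my assumptions rather than documented behavior: category scores are floored at 0, and the tier boundaries are applied with `>=` to fractional scores.

```python
DEDUCTIONS = {"critical": 25, "major": 10, "minor": 3, "suggestion": 0}

# Category weights in percent, as listed in the table above.
WEIGHTS = {
    "security": 20,
    "solid": 15,
    "architecture": 15,
    "error_handling": 12,
    "performance": 12,
    "test_quality": 10,
    "code_smells": 8,
    "design_patterns": 4,
    "framework": 4,
}


def category_score(severities):
    """Start at 100 and deduct per finding; floored at 0 (an assumption)."""
    return max(0, 100 - sum(DEDUCTIONS[s] for s in severities))


def overall_score(findings_by_category):
    """Weighted average of the category scores."""
    total = sum(
        weight * category_score(findings_by_category.get(category, []))
        for category, weight in WEIGHTS.items()
    )
    return total / 100


def tier(score):
    if score >= 80:
        return "Healthy"
    if score >= 60:
        return "Needs Attention"
    return "Critical"


findings = {
    "security": ["critical", "major"],                # 100 - 25 - 10 = 65
    "code_smells": ["minor", "minor", "suggestion"],  # 100 - 3 - 3 = 94
}
score = overall_score(findings)
print(score, tier(score))  # 92.52 Healthy
```

Note how one critical and one major security finding drop that category to 65 while the overall score stays above 90. That is why it pays to read the per-category scores and not only the headline number.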

The /codeprobe health command gives you a dashboard view with these scores alongside file statistics — total lines of code, file counts, method counts, and test-to-code ratios.


How Do You Run Your First Audit?

Stack Overflow’s 2026 Developer Survey showed that daily AI users merge approximately 60% more PRs than non-users (Stack Overflow, 2026). Getting started with CodeProbe takes one command.

From building to daily use: I built CodeProbe because I was tired of reviewing my own code across multiple projects and missing the same categories of issues. The first time I ran a full audit on a Laravel codebase I’d been working on for months, the security agent found three SQL injection vectors I’d walked past in every review. That’s when I knew the tool was worth open-sourcing.

One-Command Install (macOS/Linux)

curl -fsSL https://raw.githubusercontent.com/nishilbhave/codeprobe-claude/main/install.sh | bash

Manual Install

git clone https://github.com/nishilbhave/codeprobe-claude.git

cd codeprobe-claude

./install.sh

Windows (Git Bash)

Requires Git for Windows, which includes Git Bash.

# Option 1: One-command install (run from Git Bash, not PowerShell/CMD)

curl -fsSL https://raw.githubusercontent.com/nishilbhave/codeprobe-claude/main/install-win.sh | bash

# Option 2: Manual install

git clone https://github.com/nishilbhave/codeprobe-claude.git

cd codeprobe-claude

./install-win.sh

The install script symlinks skill directories into ~/.claude/skills/ and won’t overwrite existing skills without asking. Python 3.8+ is optional — it enables the /codeprobe health statistics dashboard.

Run your first audit:

/codeprobe audit .

That’s it. The orchestrator detects your stack, loads the right reference guides, and dispatches all nine agents. You get a full report with severity-scored findings, a refactoring roadmap, and fix prompts for every issue.

Other commands worth knowing:

| Command | What it does |
|---|---|
| /codeprobe quick . | Top 5 most impactful issues with fix prompts |
| /codeprobe security . | Security-only scan |
| /codeprobe solid . | SOLID principles-only scan |
| /codeprobe health . | Codebase vitals dashboard with scores |
| /codeprobe architecture . | Architecture and dependency analysis |
| /codeprobe smells . | Code smell detection |

Customize thresholds by adding a .codeprobe-config.json to your project root:

    {
      "severity_overrides": {
        "long_method_loc": 50,
        "large_class_loc": 500,
        "deep_nesting_max": 4
      },
      "skip_categories": ["codeprobe-testing"],
      "skip_rules": ["SPEC-GEN-001"]
    }

Every team has different tolerances. Maybe your team is fine with longer methods in data transformation pipelines. Maybe you want to skip test quality checks until you’ve addressed security findings first. The config file makes it your tool, not mine.
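If you're curious how overrides like these typically combine with built-in defaults, here is a rough sketch. The default values and the `load_config` function are hypothetical. I'm only illustrating the merge, not quoting CodeProbe's actual defaults or loader.

```python
import json

# Hypothetical defaults, chosen only for this example.
DEFAULTS = {
    "severity_overrides": {
        "long_method_loc": 30,
        "large_class_loc": 300,
        "deep_nesting_max": 3,
    },
    "skip_categories": [],
    "skip_rules": [],
}


def load_config(text):
    """Merge the JSON text of a .codeprobe-config.json over the defaults."""
    user = json.loads(text) if text else {}
    return {
        # Per-threshold merge: unspecified thresholds keep their defaults.
        "severity_overrides": {
            **DEFAULTS["severity_overrides"],
            **user.get("severity_overrides", {}),
        },
        "skip_categories": list(user.get("skip_categories", DEFAULTS["skip_categories"])),
        "skip_rules": list(user.get("skip_rules", DEFAULTS["skip_rules"])),
    }


config = load_config('{"severity_overrides": {"long_method_loc": 50}}')
print(config["severity_overrides"])
```

Merging per threshold means a project can override a single value, such as method length, without restating everything else.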


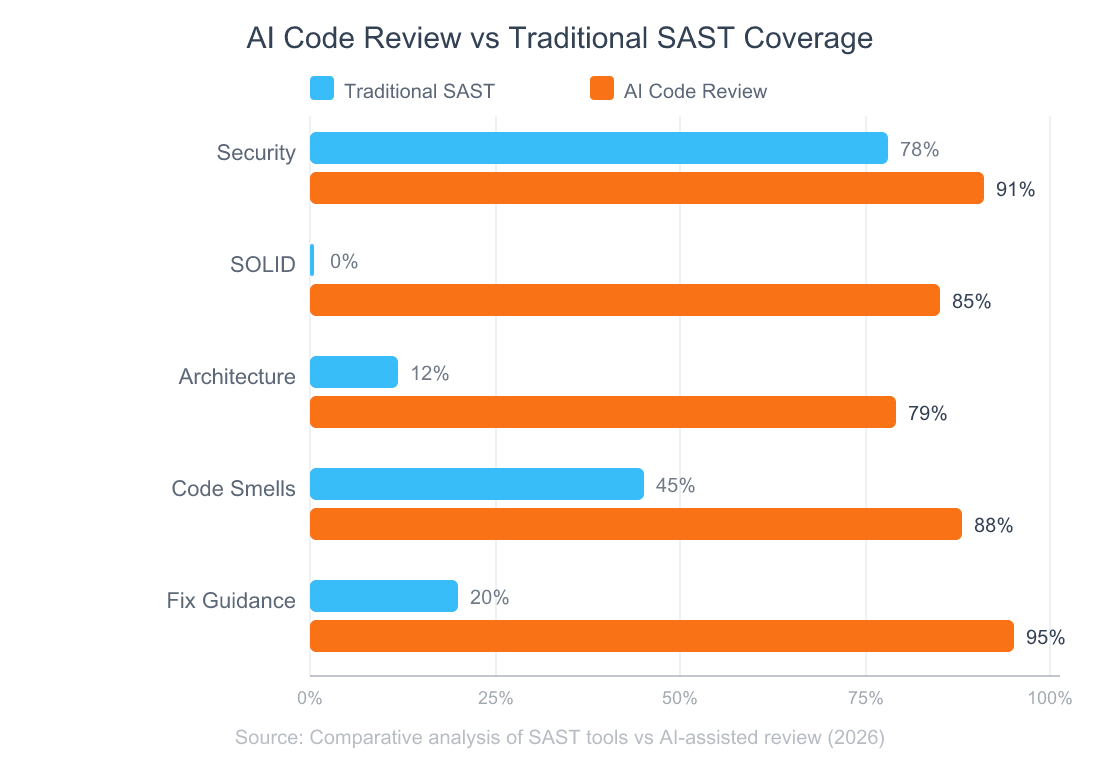

What Makes This Different from Traditional SAST Tools?

Repositories with AI-assisted review showed 28% fewer post-merge defects compared to human-only review (GitHub Octoverse, 2026). But CodeProbe isn’t just “AI review.” It’s a fundamentally different approach from traditional static analysis.

Traditional SAST tools (SonarQube, ESLint, Semgrep) use pattern matching. They’re fast and deterministic — but even the best ones achieve under 25% recall, meaning most vulnerabilities go undetected (ACM EASE 2026). And they can’t reason about intent. They don’t understand that your UserService class violates SRP because it handles authentication, profile updates, and email notifications — that requires understanding what the code is doing, not just how it’s structured.

Pattern matching finds syntax problems. Contextual reasoning finds design problems. SAST tools excel at catching eval() calls and missing input validation. CodeProbe excels at catching the architectural decay and principle violations that SAST tools are structurally incapable of detecting. The best setup uses both: SAST in CI for fast pattern checks, CodeProbe for the deeper analysis that needs reasoning.

Here’s what that difference looks like in practice:

| Capability | Traditional SAST | CodeProbe |
|---|---|---|
| SQL injection detection | Pattern-based regex | Contextual data flow analysis |
| SOLID violations | Not supported | All 5 principles |
| Architecture analysis | Limited (import graphs) | Layer violations, god objects, circular deps |
| Fix guidance | Error codes + docs links | Copy-pasteable fix prompts |
| Stack awareness | Config-per-language | Auto-detection + reference guides |
| Code smells | Basic complexity metrics | 6 categories with configurable thresholds |

CodeProbe doesn’t replace your linter. It covers the ground your linter can’t reach.


Frequently Asked Questions

Does CodeProbe modify my code?

No. It’s strictly read-only. The tool never writes, edits, or deletes any file in your project. Every finding includes a fix prompt you can choose to run — but the tool itself only reads and reports. This was a deliberate design decision: audit tools shouldn’t have write access. Over 1.3 million repos now use AI code review integrations (GitHub Octoverse, 2026), and read-only operation is a baseline trust requirement.

What programming languages does it support?

Auto-detection covers Python, JavaScript, TypeScript, React/Next.js, PHP/Laravel, SQL, and API design with dedicated reference guides. File statistics support extends to Java, Ruby, Go, Rust, Vue, Svelte, Shell, CSS/SCSS, and HTML. The JetBrains 2026 survey showed web developers (52%) and DevOps engineers (49%) have the highest AI code review adoption (JetBrains, 2026) — and those are exactly the stacks CodeProbe covers best.

Can I customize the severity thresholds?

Yes. Drop a .codeprobe-config.json in your project root to override thresholds for method length, class size, nesting depth, and constructor dependencies. You can also skip entire categories or individual rules. The tool respects that different teams and projects have different standards.

How does it compare to GitHub Copilot code review?

Copilot’s code review focuses on inline suggestions during PR review. CodeProbe runs a comprehensive audit across 9 dimensions with a scoring system, refactoring roadmap, and fix prompts. Repositories using AI-assisted review show 32% faster merge times (GitHub Octoverse, 2026). They’re complementary: Copilot for real-time PR feedback, CodeProbe for deep periodic audits.

Does it work outside Claude Code?

In degraded mode, yes. On Claude.ai without filesystem access, you can paste or upload code and get findings with the same scoring. You’ll miss codebase-wide statistics and cross-file analysis, but individual file review works fully. The tool notes the limitation and adjusts its output accordingly.

What’s Next?

Manual code review isn’t going away. But it shouldn’t be the only line of defense for code quality, security, and architecture. Not when developers already spend 6.4 hours a week on reviews and still miss the problems that cost the most to fix.

CodeProbe gives you nine specialized agents that systematically cover what human reviewers can’t:

- Security — SQL injection, XSS, hardcoded secrets, auth flaws

- SOLID principles — SRP, OCP, LSP, ISP, DIP violations

- Architecture — Circular dependencies, layer violations, god objects

- Code smells — Bloaters, dead code, deep nesting, duplication

- Performance — N+1 queries, memory leaks, rendering issues

- Error handling, test quality, design patterns, framework best practices

Every finding is severity-scored. Every finding has a fix prompt. The tool is open-source, MIT-licensed, and installs in one command.

Try it:

curl -fsSL https://raw.githubusercontent.com/nishilbhave/codeprobe-claude/main/install.sh | bash

Then run /codeprobe audit . in your project and see what it finds. The results might surprise you — they surprised me.
